This Chinese AI Breakthrough Could Change How LLMs Are Built

What it is

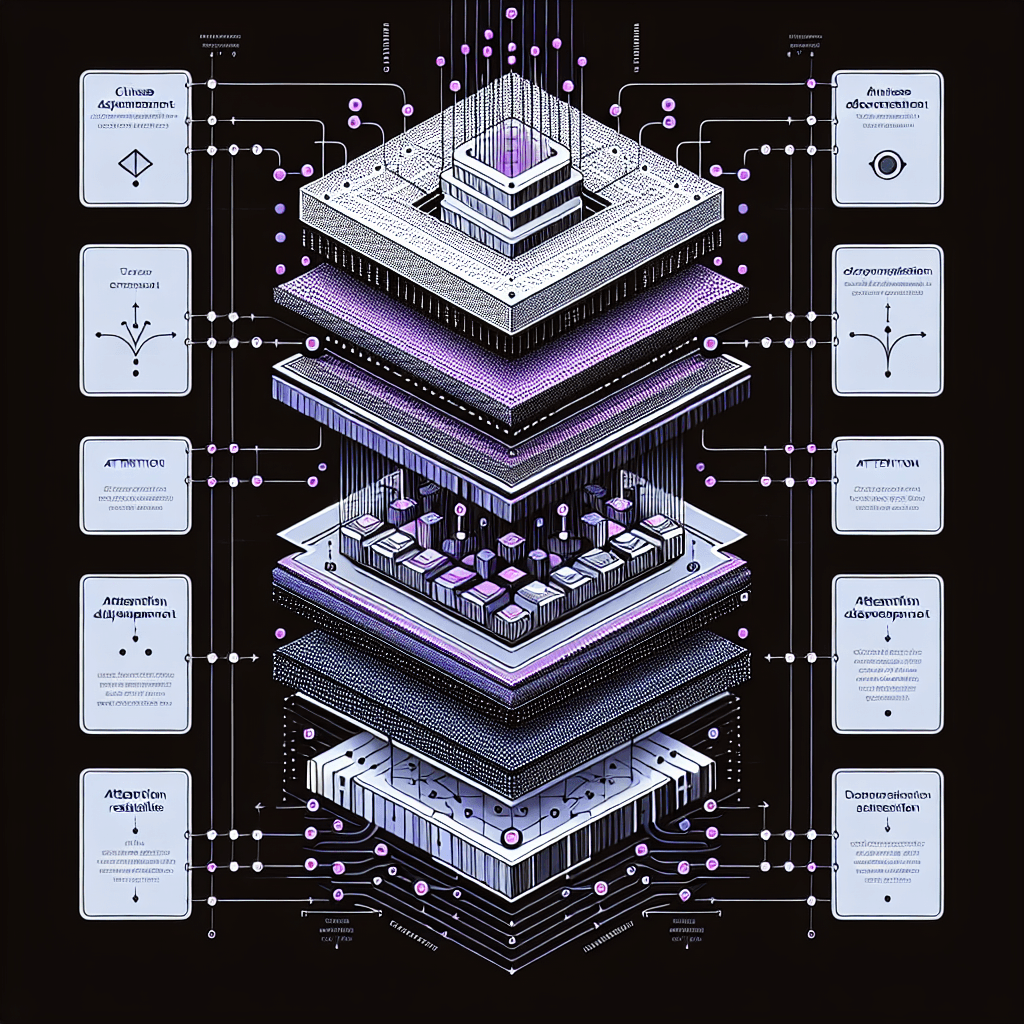

Picture a relay race where runners fumble the baton at every handoff: that is roughly what happens when context degrades as it passes from one transformer layer to the next. Attention Residuals targets how information flows between those layers, the building blocks of GPT-4, Claude, and most modern LLMs. Instead of forcing each layer to reconstruct context from scratch, the method aims to preserve critical signals across the network.

Why it matters

If this works at scale, future models could become faster and cheaper to run without architectural overhauls. For developers: watch for this technique in open-source implementations. For users: existing model sizes could deliver better performance. China's AI research continues punching above its weight in fundamental architecture work.

Key details

- Published by Moonshot AI, the Beijing-based team behind the Kimi chatbot (a GPT-4 competitor in China)

- Paper titled 'Attention Residuals', focused on inter-layer information flow in transformers

- Addresses inefficiency in how current LLMs propagate context through deep networks

- Potential for retrofit: may improve existing models without full retraining

- Joins recent Chinese contributions like DeepSeek's mixture-of-experts work
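To make "inter-layer information flow" concrete, here is a toy sketch in plain Python. It contrasts a standard residual stream, which sums every earlier layer's output with equal weight, against a depth-wise softmax mix that weights earlier outputs by relevance to a query. The second function is an illustrative assumption, not the paper's formulation: the function names, the dot-product scoring, and the sample vectors are all hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def standard_residual(layer_outputs):
    """Plain residual stream: earlier layer outputs are summed with equal weight."""
    dim = len(layer_outputs[0])
    return [sum(out[i] for out in layer_outputs) for i in range(dim)]


def attention_over_depth(layer_outputs, query):
    """Hypothetical alternative (an assumption, not taken from the paper):
    weight each earlier layer output by softmax(query . output)."""
    scores = [sum(q * x for q, x in zip(query, out)) for out in layer_outputs]
    weights = softmax(scores)
    dim = len(layer_outputs[0])
    mixed = [
        sum(w * out[i] for w, out in zip(weights, layer_outputs))
        for i in range(dim)
    ]
    return mixed, weights


# Made-up outputs of three earlier layers (2-dimensional for readability).
layer_outputs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
# Made-up query standing in for what the current layer is looking for.
query = [2.0, 0.0]

summed = standard_residual(layer_outputs)
mixed, weights = attention_over_depth(layer_outputs, query)
print("equal-weight sum:", summed)
print("depth weights:", [round(w, 3) for w in weights])
print("weighted mix:", [round(v, 3) for v in mixed])
```

In this toy setup the second layer's output, which does not align with the query, receives the smallest weight, while the equal-weight sum treats all three layers the same. Whether the published method scores or mixes layers this way is not stated in the summary above.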

Worth watching

4:25:13

State of AI in 2026: LLMs, Coding, Scaling Laws, China, Agents, GPUs, AGI | Lex Fridman Podcast #490

Lex Fridman

This podcast directly addresses China's AI developments, LLM scaling approaches, and how they compare to Western models, providing crucial context for understanding the Chinese breakthrough's impact on LLM architecture.

10:25

A New Kind of AI Is Emerging And Its Better Than LLMS?

TheAIGRID

This video explores emerging alternatives to traditional LLMs, which is essential for understanding how a Chinese breakthrough might represent a fundamentally different approach to building AI systems.

40:22

China’s Next AI Shock Is Hardware

CNBC