The case for zero-error horizons in trustworthy LLMs

What it is

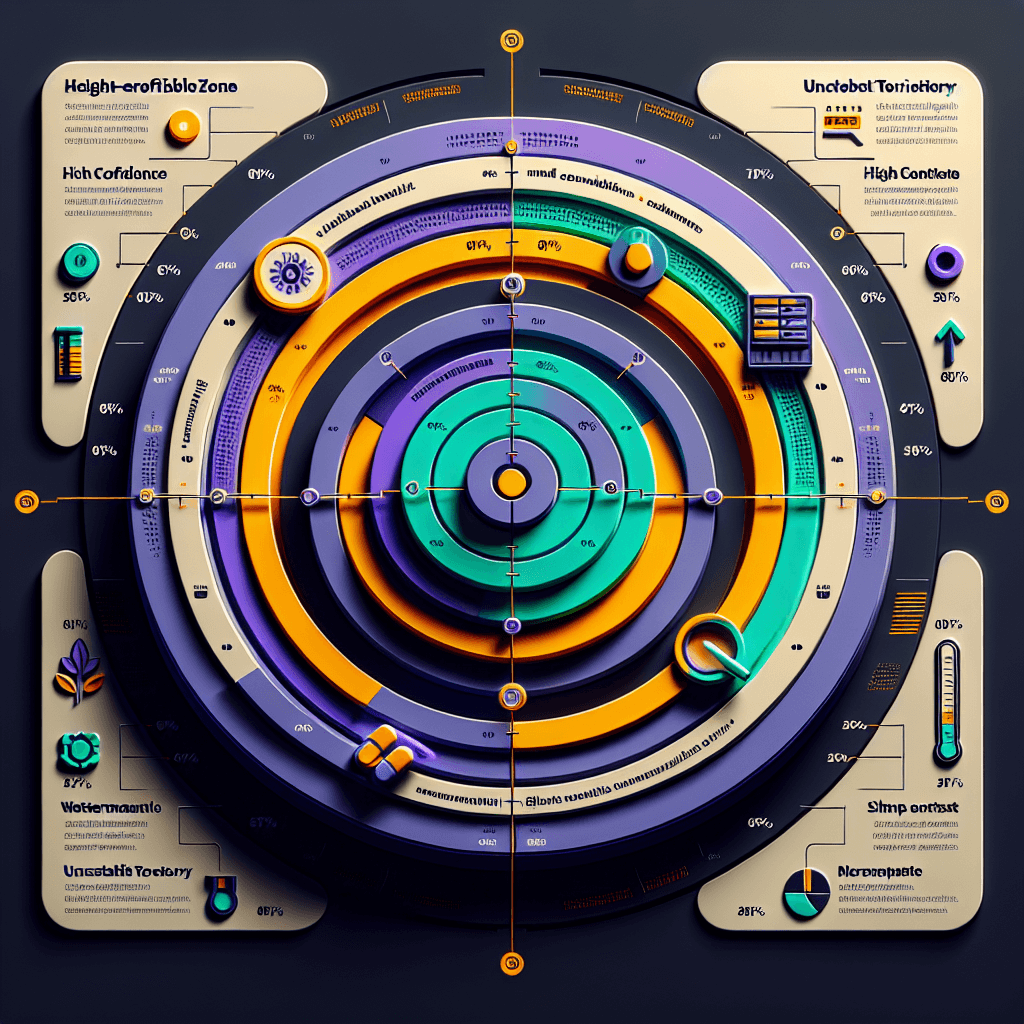

Zero-error horizons are bounded reliability zones for LLMs. Picture a target: the bullseye covers tasks where the model guarantees zero mistakes, and the outer rings cover tasks where it admits uncertainty or refuses to answer. Instead of aiming for 95% accuracy on everything, the model commits to 100% accuracy on a narrower scope, then clearly signals when a request falls outside that zone.
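To make the target picture concrete, here is a minimal Python sketch under stated assumptions. It treats the horizon as the largest difficulty level up to which every tested case passed, then answers only inside that level. The names `zero_error_horizon` and `guarded_answer`, the idea of integer difficulty levels, and the refusal message are all hypothetical illustrations, not taken from the paper. Note that "guarantee" here only means zero observed errors on the tested cases, not a formal proof.

```python
from typing import Callable, Dict, List


def zero_error_horizon(results: Dict[int, List[bool]]) -> int:
    """Return the largest level n such that every test at levels 1..n passed.

    `results` maps a difficulty level (1, 2, 3, ...) to pass/fail outcomes.
    A missing or empty level counts as unverified and ends the horizon.
    """
    horizon = 0
    while results.get(horizon + 1) and all(results[horizon + 1]):
        horizon += 1
    return horizon


def guarded_answer(level: int, horizon: int, answer_fn: Callable[[], str]) -> str:
    """Answer only inside the horizon; otherwise signal that we are outside it."""
    if 1 <= level <= horizon:
        return answer_fn()
    return "outside zero-error horizon: answer not guaranteed"


# Hypothetical evaluation results: level 3 has one failure,
# so the horizon stops at level 2 even though level 4 passed.
results = {1: [True, True], 2: [True], 3: [True, False], 4: [True]}
horizon = zero_error_horizon(results)
print(horizon)
print(guarded_answer(2, horizon, lambda: "answer from the model"))
print(guarded_answer(4, horizon, lambda: "answer from the model"))
```

The design choice worth noticing is that a single failure at any level caps the horizon, no matter how well the model does on harder cases. That is what separates a zero-error boundary from an average accuracy score.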

Why it matters

This matters if you're building AI into high-stakes workflows. Current models hallucinate confidently, which is dangerous in medical diagnosis, legal analysis, or financial advice. A model with a zero-error horizon would tell you, "I can guarantee accuracy on basic drug interactions, but complex cases are outside my zone." That is honest reliability instead of probabilistic roulette. If you're evaluating AI vendors, start asking: what's your error-free boundary?

Key details

- Paper published January 2026 on arXiv: an academic proposal, not a shipping product

- Applies to 'trustworthy LLM' research: models that need verifiable reliability, not entertainment chatbots

- Core idea: trade breadth for guaranteed correctness in defined domains

- Would require new training objectives that penalize confident errors more than admitting ignorance

- Challenges the existing 'scale solves everything' narrative and suggests architectural rethinking is needed
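The point about penalizing confident errors more than admitting ignorance can be illustrated with a simple asymmetric scoring rule. This is a sketch under stated assumptions, not the paper's objective: the function names, the three outcome labels, and the default penalty of 3.0 are all hypothetical. A correct answer earns +1, an abstention earns 0, and a wrong answer costs `wrong_penalty`.

```python
from typing import List


def abstention_aware_score(outcomes: List[str], wrong_penalty: float = 3.0) -> float:
    """Average score where wrong answers cost more than abstaining.

    Each outcome is 'correct' (+1), 'abstain' (0), or 'wrong' (-wrong_penalty).
    """
    if not outcomes:
        raise ValueError("outcomes must not be empty")
    points = {"correct": 1.0, "abstain": 0.0, "wrong": -wrong_penalty}
    return sum(points[o] for o in outcomes) / len(outcomes)


def should_answer(confidence: float, wrong_penalty: float = 3.0) -> bool:
    """Answer only if the expected score beats abstaining (which scores 0).

    Expected score of answering: p * 1 - (1 - p) * wrong_penalty.
    This is positive exactly when p > wrong_penalty / (1 + wrong_penalty).
    """
    return confidence > wrong_penalty / (1.0 + wrong_penalty)


# With a penalty of 3, the break-even confidence is 3 / 4 = 0.75.
print(should_answer(0.8))
print(should_answer(0.7))
print(abstention_aware_score(["correct", "abstain", "wrong"]))
```

Raising `wrong_penalty` pushes the break-even confidence toward 1, so the model is rewarded for answering only where it is nearly certain. That is the trade of breadth for correctness described in the list above.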

Worth watching

12:35 Even GPT-5.2 Can't Count to Five: The Case for Zero-Error Horizons in Trustworthy LLMs (Jan 2026)

AI Paper Slop

This video directly addresses the core topic with a specific case study (GPT-5.2's counting failures) that illustrates the fundamental challenges in achieving zero-error performance in LLMs.

0:05 Agentic RAG vs RAGs

Rakesh Gohel

Agentic RAG systems represent a practical architectural approach to improving LLM reliability through retrieval augmentation, which is relevant to building more trustworthy and accurate LLM systems.
