The AI agent security checklist for IT

What it is

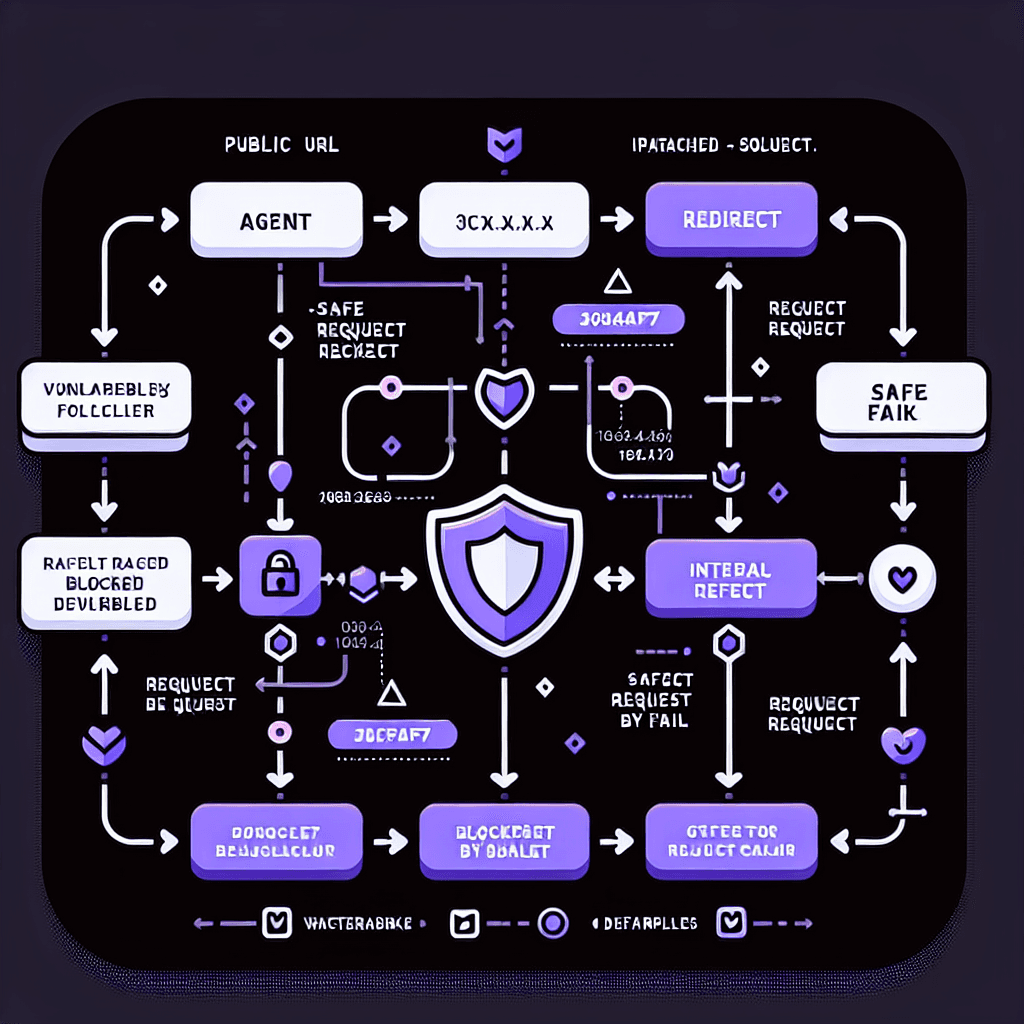

Server-Side Request Forgery (SSRF) via redirects is a classic attack: an AI agent fetches what looks like a safe public URL, but the server returns a 3xx redirect pointing at an internal address—think 192.168.x.x, localhost, or AWS metadata endpoints. The agent follows the redirect and leaks credentials or internal data. Open-WebUI's fix disables redirect-following across all HTTP client calls.
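
The core of the fix, refusing to follow redirects, can be illustrated with Python's standard library. Below is a minimal sketch that assumes urllib.request as the HTTP client. It is not Open-WebUI's code, and `NoRedirectHandler` and `fetch_without_redirects` are hypothetical names. The handler refuses every 3xx, so the redirect target is never contacted:

```python
import urllib.error
import urllib.request

# Sketch only: stdlib urllib, not Open-WebUI's own HTTP client code.
REDIRECT_CODES = {301, 302, 303, 307, 308}


class NoRedirectHandler(urllib.request.HTTPRedirectHandler):
    """Hypothetical handler that refuses every 3xx instead of following it."""

    def redirect_request(self, req, fp, code, msg, headers, newurl):
        # Returning None means "do not build a follow-up request"; urllib then
        # falls through to its default error handler and raises HTTPError.
        return None


def build_no_redirect_opener() -> urllib.request.OpenerDirector:
    # Passing a subclass instance replaces urllib's default redirect handler.
    return urllib.request.build_opener(NoRedirectHandler())


def fetch_without_redirects(url: str, timeout: float = 10.0) -> bytes:
    """Fetch url once; a redirect response is reported, never followed."""
    opener = build_no_redirect_opener()
    try:
        with opener.open(url, timeout=timeout) as resp:
            return resp.read()
    except urllib.error.HTTPError as err:
        if err.code in REDIRECT_CODES:
            target = err.headers.get("Location")
            raise PermissionError(
                f"refused HTTP {err.code} redirect from {url} to {target}"
            ) from err
        raise
```

With this opener, a 302 pointing at http://169.254.169.254/ never triggers a second request. Instead, the caller gets a PermissionError that names the refused Location, which is worth logging. In aiohttp, the equivalent per-request switch is the `allow_redirects=False` argument.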

Why it matters

AI agents are fundamentally untrusted code—they execute LLM-generated commands, fetch arbitrary URLs, and run toolchains you didn't write. The Open-WebUI patch demonstrates the baseline security posture: default-deny on network, default-block on redirects, explicit allowlists only. If you run agents that touch internal networks or cloud metadata services, audit every HTTP call site and turn off redirect-following unless you have a specific, logged reason to allow it.
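
When a redirect genuinely has to be followed, one option is to follow it by hand so every hop is checked against an explicit allowlist and logged. Below is a minimal sketch that assumes a hypothetical `fetch(url)` callable. It performs a single request with automatic redirects turned off and returns the status code and Location header. `allowlist_checker` and `follow_redirects_checked` are illustrative names, not part of Open-WebUI or any library:

```python
import logging
from typing import Callable, Optional, Tuple
from urllib.parse import urljoin, urlsplit

log = logging.getLogger("agent.egress")

# Hypothetical transport signature: fetch(url) -> (status, Location header or None).
# A real implementation would wrap an HTTP client with auto-redirects disabled.
Fetch = Callable[[str], Tuple[int, Optional[str]]]

REDIRECT_CODES = {301, 302, 303, 307, 308}


def allowlist_checker(allowed_hosts: set[str]) -> Callable[[str], bool]:
    """Allow only http(s) URLs whose host is explicitly listed."""
    def is_allowed(url: str) -> bool:
        parts = urlsplit(url)
        return parts.scheme in ("http", "https") and parts.hostname in allowed_hosts
    return is_allowed


def follow_redirects_checked(
    url: str,
    fetch: Fetch,
    is_allowed: Callable[[str], bool],
    max_hops: int = 3,
) -> Tuple[int, str]:
    """Follow redirects one hop at a time, logging and validating every target."""
    if not is_allowed(url):
        raise PermissionError(f"URL not allowed: {url}")
    hops = 0
    while True:
        status, location = fetch(url)
        if status not in REDIRECT_CODES:
            return status, url  # final (non-redirect) response
        if hops >= max_hops:
            raise PermissionError(f"more than {max_hops} redirects, last at {url}")
        if not location:
            raise ValueError(f"{status} from {url} has no Location header")
        target = urljoin(url, location)  # Location may be relative
        hops += 1
        log.warning("redirect %d: %s -> %s (HTTP %d)", hops, url, target, status)
        if not is_allowed(target):
            raise PermissionError(f"blocked redirect: {url} -> {target}")
        url = target


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    # Canned responses standing in for real HTTP calls.
    fake = {
        "https://docs.example.com/a": (302, "/b"),
        "https://docs.example.com/b": (200, None),
        "https://docs.example.com/evil": (302, "http://169.254.169.254/latest/meta-data/"),
    }
    check = allowlist_checker({"docs.example.com"})
    print(follow_redirects_checked("https://docs.example.com/a", fake.__getitem__, check))
    try:
        follow_redirects_checked("https://docs.example.com/evil", fake.__getitem__, check)
    except PermissionError as exc:
        print("blocked:", exc)
```

Because the transport is injected, the demo runs against canned responses. The same-host hop from /a to /b is followed. The jump to 169.254.169.254 is refused because that host is not on the allowlist. Checking every hop, not just the first URL, is what closes the public URL → 3xx → internal address path.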

Key details

- Open-WebUI v0.9.5 ships with the AIOHTTP_CLIENT_ALLOW_REDIRECTS environment variable to block 3xx redirects

- Affected surfaces: web fetch, image loading, OAuth discovery, tool server execution, code interpreter login

- Attack vector blocked: public URL → 3xx redirect → RFC 1918 private IP or cloud metadata endpoint (169.254.169.254)

- Security model: treat agents as untrusted code, with default-deny for file access, network egress, and redirect-following

- PR #24491 on GitHub details the patch, released May 2025
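
The attack vector above depends on where a URL's host finally resolves. Below is a minimal sketch of a destination check that assumes Python's standard ipaddress and socket modules. `is_public_address` and `check_destination` are hypothetical helpers, not Open-WebUI code. The check treats anything that is not globally routable as off-limits, including RFC 1918 ranges, loopback, and link-local addresses such as 169.254.169.254:

```python
import ipaddress
import socket
from urllib.parse import urlsplit

# Hypothetical helpers for illustration; not taken from Open-WebUI.


def is_public_address(addr: str) -> bool:
    """Default-deny: True only for globally routable, non-multicast addresses."""
    ip = ipaddress.ip_address(addr.split("%", 1)[0])  # drop any IPv6 zone ID
    # Judge IPv4-mapped IPv6 (e.g. ::ffff:10.0.0.1) by its embedded IPv4 address.
    if isinstance(ip, ipaddress.IPv6Address) and ip.ipv4_mapped is not None:
        ip = ip.ipv4_mapped
    # is_global is False for RFC 1918 ranges, loopback, link-local
    # (which includes the 169.254.169.254 metadata address), and other
    # special-purpose blocks.
    return ip.is_global and not ip.is_multicast


def check_destination(url: str) -> list[str]:
    """Resolve the URL's host; raise if any resolved address is non-public."""
    parts = urlsplit(url)
    if parts.scheme not in ("http", "https") or not parts.hostname:
        raise PermissionError(f"unsupported URL: {url}")
    port = parts.port or (443 if parts.scheme == "https" else 80)
    infos = socket.getaddrinfo(parts.hostname, port, proto=socket.IPPROTO_TCP)
    addrs = sorted({info[4][0] for info in infos})
    blocked = [a for a in addrs if not is_public_address(a)]
    if blocked:
        raise PermissionError(f"{parts.hostname} resolves to non-public {blocked}")
    return addrs
```

Resolving first and connecting later leaves a DNS-rebinding window, because the name can resolve differently on the second lookup. A hardened client would connect to the exact address it validated. If redirects are enabled at all, run the check on the initial URL and again on every redirect target.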

Worth watching

8:47

AI Model Penetration: Testing LLMs for Prompt Injection & Jailbreaks

IBM Technology

This IBM Technology video directly addresses security vulnerabilities in LLMs, including prompt injection and jailbreaks, which are critical threat vectors that IT security teams must understand when deploying AI agents.

13:13

How to Secure AI Business Models

IBM Technology

This IBM Technology video covers securing AI business models from a governance and risk perspective, providing IT professionals with frameworks for implementing security controls across AI agent deployments.

58:56

Building AI Agents that actually work (Full Course)

Greg Isenberg

Understanding how to build functional AI agents is foundational to comprehending their security implications, and this full course provides the technical depth needed to identify potential attack surfaces and failure modes.