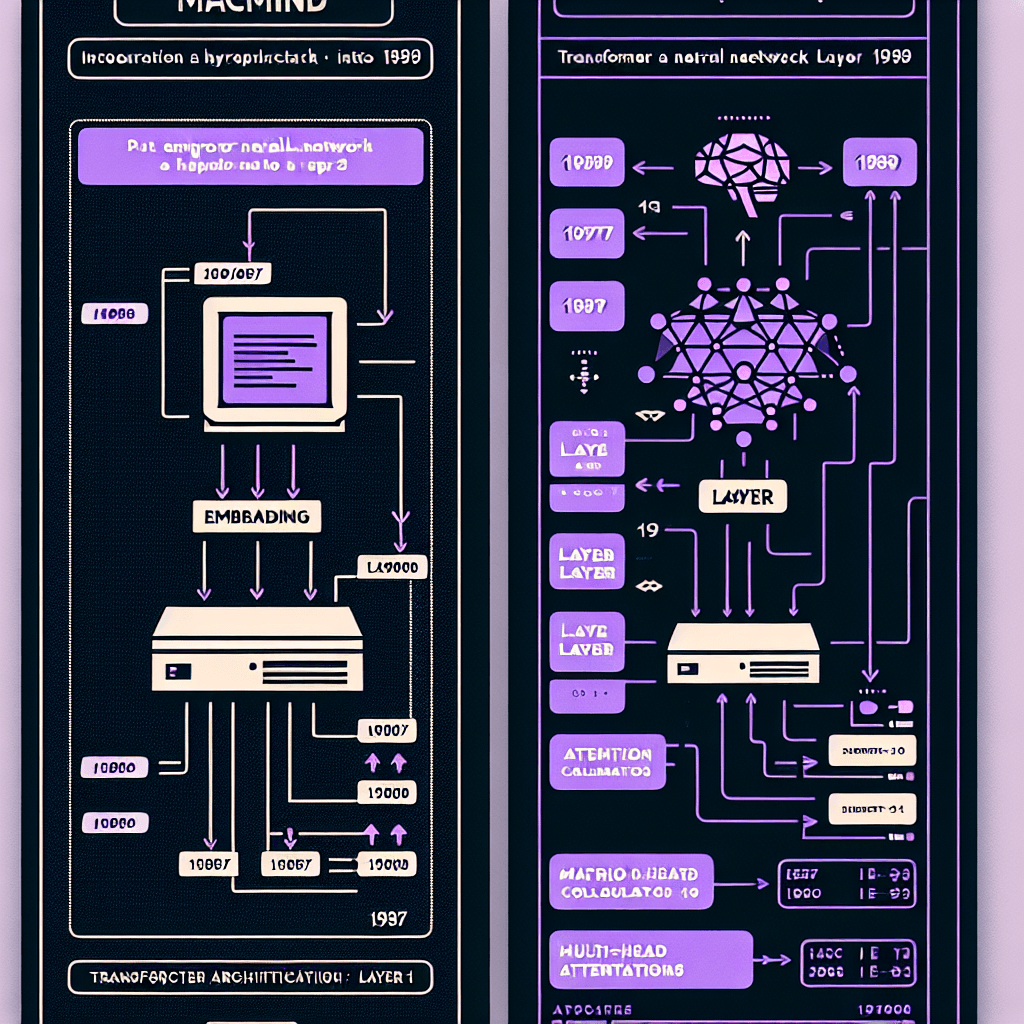

Show HN: MacMind – A transformer neural network in HyperCard on a 1989 Macintosh

What it is

MacMind is a fully functional transformer neural network written entirely in HyperTalk, the simple scripting language Apple shipped with HyperCard in 1987. Think of it as building a modern AI architecture using only the tools available when dial-up modems were cutting-edge. It includes all the core components: embedding layers, positional encoding, self-attention mechanisms, and backpropagation for training.
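To make the self-attention component concrete, here is a minimal sketch of single-head scaled dot-product attention in plain Python. It is not MacMind's HyperTalk code. The function names (`matmul`, `softmax`, `self_attention`), the weight matrices, and the tiny 2x2 input are all assumptions chosen for illustration, and the sketch covers only the forward pass, not embeddings, positional encoding, or backpropagation.

```python
import math

def matmul(a, b):
    # Multiply two matrices stored as lists of rows.
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def softmax(row):
    # Subtract the max before exponentiating for numerical stability.
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(x, w_q, w_k, w_v):
    # x: one row per token; w_q, w_k, w_v: hypothetical projection matrices.
    q = matmul(x, w_q)
    k = matmul(x, w_k)
    v = matmul(x, w_v)
    d_k = len(q[0])
    k_t = [list(col) for col in zip(*k)]  # transpose of k
    scores = matmul(q, k_t)               # token-to-token similarity
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v), weights

# Illustrative input: two tokens, identity projections.
tokens = [[1.0, 0.0], [0.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]
output, attn = self_attention(tokens, identity, identity, identity)
```

Each row of `attn` sums to 1 and says how much one token draws from every other token. With identity projections, each token attends most strongly to itself.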

Why it matters

This isn't about performance—it's about understanding. By stripping transformers down to their fundamentals and implementing them in a language anyone can read (option-click any button to see the actual math), it makes the architecture tangible. If you've ever felt intimidated by transformer papers, seeing one work in 1989-era code is like watching a magic trick in slow motion. Plus, it's a reminder that the ideas matter more than the infrastructure.

Key details

- 1,216 total parameters: tiny by modern standards, but enough to learn simple patterns

- Trained to perform bit-reversal permutations (a classic sequence-to-sequence task)

- Runs on a 1989 Macintosh, though training is extremely slow

- Full source code viewable in HyperCard's built-in script editor, no IDE required

- Uses HyperTalk, a near-English scripting language designed for non-programmers
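For readers unfamiliar with the training task, the sketch below shows what a bit-reversal permutation does to a sequence: the element at position `i` in the output comes from the position whose binary index is `i` with its bits reversed. This is written in Python rather than HyperTalk, and the function names and the length-8 example are assumptions for illustration. The sequence length MacMind actually trains on is not stated here.

```python
def bit_reverse_index(i, n_bits):
    # Reverse the lowest n_bits bits of i, e.g. 0b001 -> 0b100 for n_bits=3.
    r = 0
    for _ in range(n_bits):
        r = (r << 1) | (i & 1)
        i >>= 1
    return r

def bit_reversal_permutation(seq):
    # Reorder seq so that output[i] = seq[bit-reversed i].
    n = len(seq)
    if n == 0 or n & (n - 1):
        raise ValueError("sequence length must be a power of two")
    n_bits = n.bit_length() - 1
    return [seq[bit_reverse_index(i, n_bits)] for i in range(n)]

print(bit_reversal_permutation(list(range(8))))
# → [0, 4, 2, 6, 1, 5, 3, 7]
```

The mapping is fixed and depends only on position, so a model has to learn which input position each output position should copy from. Applying the permutation twice returns the original sequence.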