Show HN: Duplicate 3 layers in a 24B LLM, logical deduction .22→.76. No training

What it is

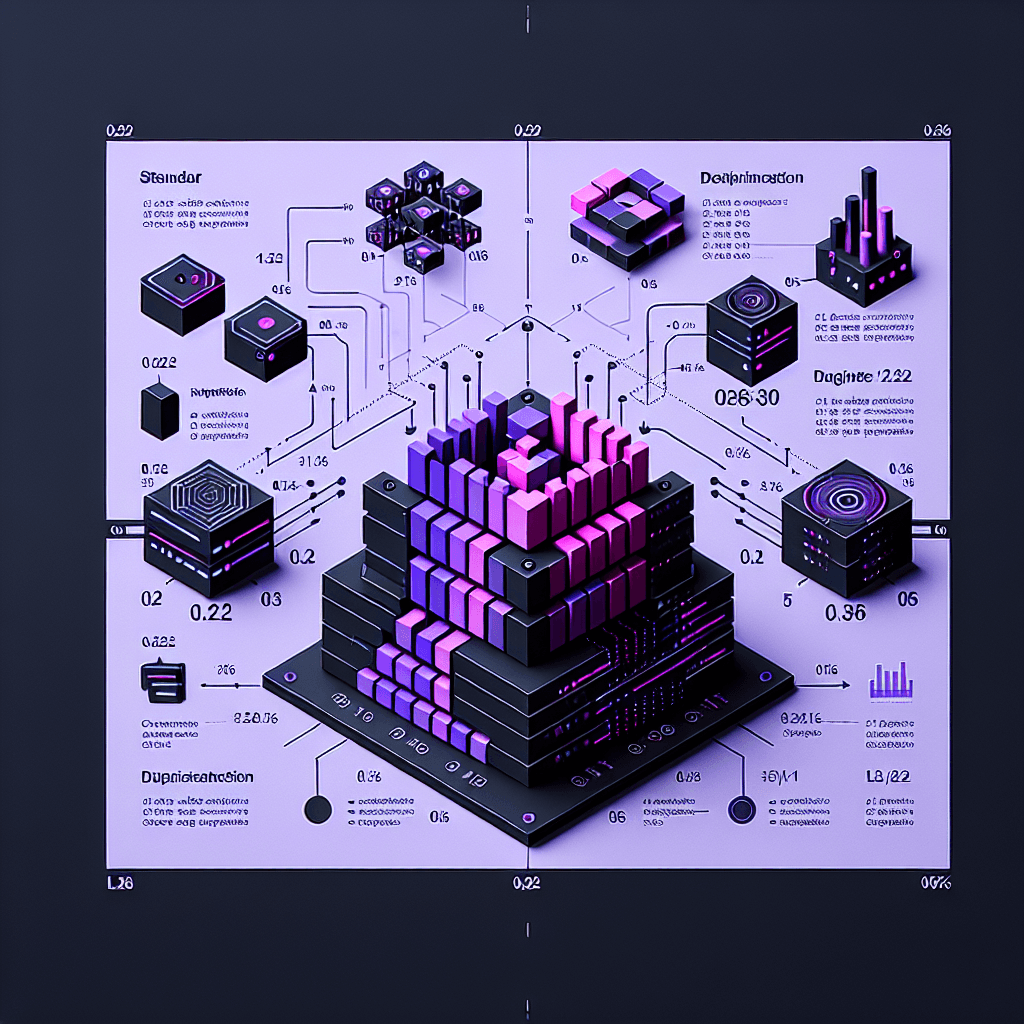

Picture a transformer model as a factory assembly line with 32 stations. The claim is that a few consecutive stations, say 12 to 14, form a complete "reasoning module": duplicate those three stations and every product passes through them twice, coming out at higher quality. The RYS method applies this idea to already-trained models. It copies a specific block of layers so that the model runs the same reasoning circuit more than once on each forward pass, with no change to the weights.
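The assembly-line picture can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the author's code: `duplicated_layer_order` and `run_layers` are hypothetical names, the "layers" are plain functions rather than real transformer blocks, and the 32-layer depth and the 12 to 14 block are only the illustrative numbers from the analogy and the title.

```python
from typing import Callable, List, Sequence


def duplicated_layer_order(num_layers: int, start: int, end: int, repeats: int = 1) -> List[int]:
    """Return layer indices in execution order, with the block [start, end]
    (inclusive) run `repeats` extra times right after its first pass."""
    if not (0 <= start <= end < num_layers):
        raise ValueError("block must lie inside the layer stack")
    if repeats < 0:
        raise ValueError("repeats must be non-negative")
    block = list(range(start, end + 1))
    order = list(range(end + 1))              # layers 0..end, first pass
    for _ in range(repeats):
        order.extend(block)                   # second (third, ...) pass through the block
    order.extend(range(end + 1, num_layers))  # the rest of the stack, unchanged
    return order


def run_layers(layers: Sequence[Callable], order: Sequence[int], x):
    """Apply the layers in the given order; a duplicated index reuses the same weights."""
    for i in order:
        x = layers[i](x)
    return x


# Toy "layers": each one just records that it ran (hypothetical stand-ins).
toy_layers = [lambda trace, i=i: trace + [i] for i in range(32)]
order = duplicated_layer_order(num_layers=32, start=12, end=14)
print(order[10:20])                           # the duplicated block sits in the middle
print(run_layers(toy_layers, order, []) == order)
```

The duplicated indices point at the same layer objects, which is the point of the technique: the stack gets deeper, but no new weights are introduced or trained.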

Why it matters

You can potentially upgrade your local LLMs for logic-heavy tasks without retraining or fine-tuning. If you run inference on consumer hardware (such as an AMD RX 7900 XT), this technique could turn a mediocre reasoner into a capable one just by editing the model's layer stack. The catch: it is model-specific, and you first have to find which layers actually form a reasoning circuit. Not every block works.

Key details

- Tested on Devstral-24B: duplicating three layers (12-14) raised BBH logical deduction accuracy from 0.22 to 0.76

- The method involves zero training: specific transformer layers are copied in the model and inference is re-run

- Runs on consumer AMD GPUs (RX 7900 XT + RX 6950 XT), making it accessible outside the NVIDIA ecosystem

- The developer built a tool, llm-circuit-finder, to identify which layer blocks contain reasoning circuits in different models

- Builds on David Ng's RYS research, which suggests transformers contain discrete, modular cognitive units rather than refining information smoothly from one layer to the next
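
Because the right block differs per model, finding it is a search problem. The sketch below shows one naive way to frame that search: try every contiguous block of a few layers, duplicate it once, and keep the block whose score beats the unmodified baseline by the most. It is an assumption-laden illustration, not how llm-circuit-finder is actually implemented. The names `candidate_blocks` and `find_best_block`, the block widths of 3 and 4, and the `score` callback (which in practice would run a benchmark on the modified model) are all hypothetical.

```python
from typing import Callable, Iterator, List, Optional, Sequence, Tuple

Block = Tuple[int, int]  # (start, end), inclusive layer indices


def candidate_blocks(num_layers: int, widths: Sequence[int] = (3, 4)) -> Iterator[Block]:
    """Yield every contiguous block of the given widths that fits in the stack."""
    for width in widths:
        for start in range(num_layers - width + 1):
            yield start, start + width - 1


def find_best_block(
    num_layers: int,
    score: Callable[[List[int]], float],
    widths: Sequence[int] = (3, 4),
) -> Tuple[Optional[Block], float, float]:
    """Return (best_block, best_score, baseline_score).

    `score` receives the layer execution order and returns a benchmark score.
    best_block is None if no duplication beats the unmodified model.
    """
    baseline = score(list(range(num_layers)))
    best_block, best_score = None, baseline
    for start, end in candidate_blocks(num_layers, widths):
        # Run layers 0..end, then the block again, then the remaining layers.
        order = (
            list(range(end + 1))
            + list(range(start, end + 1))
            + list(range(end + 1, num_layers))
        )
        s = score(order)
        if s > best_score:
            best_block, best_score = (start, end), s
    return best_block, best_score, baseline


def toy_score(order: List[int]) -> float:
    """Stand-in for a real benchmark: rewards duplicating exactly layers 12-14."""
    repeated = {i for i in set(order) if order.count(i) == 2}
    return 1.0 if repeated == {12, 13, 14} else 0.0


print(find_best_block(32, toy_score))
```

With a real model, each call to `score` means a full benchmark run on a modified copy, so the cost of the sweep grows with the number of candidate blocks. That cost is the practical reason a dedicated search tool is useful.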