Show HN: 1-Bit Bonsai, the First Commercially Viable 1-Bit LLMs

What it is

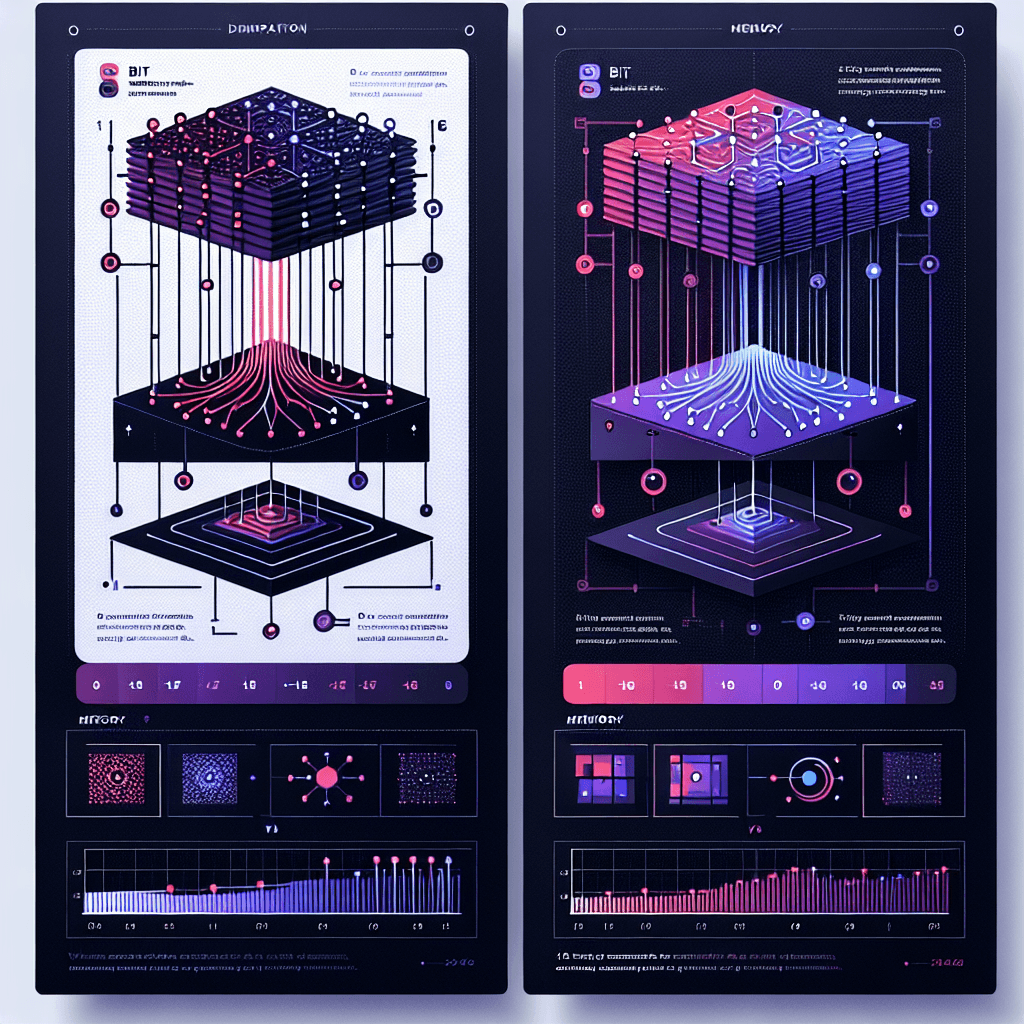

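Standard LLMs typically store weights as 16-bit floating-point numbers, or as 8-bit values after quantization. 1-Bit Bonsai is described as compressing those weights to ternary values: -1, 0, or +1. Strictly speaking, three states carry log2(3) ≈ 1.58 bits per weight rather than exactly one, so "1-bit" is a loose label here. Picture each neuron connection as a switch with three positions instead of a dimmer knob with 65,536 settings. This shrinks the memory footprint and can accelerate inference, because multiplying by -1, 0, or +1 reduces to a subtraction, a skip, or an addition, which suits hardware that prefers integer math.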
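To make the three-position switch concrete, here is a minimal sketch of ternary quantization. It assumes an "absmean" scheme (scale by the mean absolute weight, round, clip), which is one common approach in published ternary-weight work; PrismML has not published its method, so the function names and the scheme itself are illustrative assumptions, not a description of Bonsai.

```python
def ternary_quantize(weights):
    """Map float weights to {-1, 0, 1} plus one shared float scale.

    Assumption: absmean scaling. This is an illustrative scheme,
    not PrismML's published method.
    """
    # Scale = mean absolute value; fall back to 1.0 for an all-zero input.
    scale = sum(abs(w) for w in weights) / len(weights) or 1.0
    # Round each scaled weight to the nearest integer, then clip to [-1, 1].
    codes = [max(-1, min(1, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Approximate reconstruction: each weight becomes -scale, 0, or +scale."""
    return [c * scale for c in codes]


weights = [0.9, -0.05, -1.2, 0.4, 0.0, -0.6]
codes, scale = ternary_quantize(weights)
print(codes)                     # every entry is -1, 0, or 1
print(dequantize(codes, scale))  # coarse approximation of the originals
```

The reconstruction is deliberately coarse: small weights collapse to zero and large ones saturate at the scale. Whether a model survives that loss is exactly the open quality question.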

Why it matters

If 1-bit models match full-precision models on quality benchmarks, you could run capable LLMs locally on laptops, phones, or IoT devices, with no API fees, no network round-trip latency, and no data leaving your device. For developers, this means embedding intelligence in products that can't phone home. For users, it means privacy and speed. The catch: independent benchmarks are needed to show whether ternary weights actually preserve reasoning ability or whether this trades brains for compactness.

Key details

- PrismML positions this as the first commercially viable 1-bit LLM architecture, distinct from academic proofs-of-concept

- Weights quantized to {-1, 0, 1} instead of standard 16-bit floats; three states need about 1.58 bits each, so the theoretical memory reduction is roughly 10× (a full 16× would require strictly binary weights)

- No public benchmarks, pricing, model sizes, or comparisons to Llama/GPT baselines have been published yet

- Targeting edge deployment scenarios where cloud inference is impractical or expensive

- Launch announced via Show HN; technical paper and downloadable weights not yet linked
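The memory claim is easy to check with arithmetic. The sketch below compares weight storage at 16 bits, at the ternary limit of log2(3) bits, and at a strict 1 bit, then shows one simple way to store ternary weights in practice: five per byte in base 3, since 3^5 = 243 fits in 256. The 7-billion parameter count is a hypothetical example, not a published Bonsai size, and the packing layout is an assumption for illustration.

```python
import math

BITS_FP16 = 16
BITS_TERNARY = math.log2(3)  # ~1.585 bits: information content of a 3-state weight
BITS_BINARY = 1


def weight_gib(n_params, bits_per_weight):
    """Storage for weights alone, in GiB (ignores scales, activations, KV cache)."""
    return n_params * bits_per_weight / 8 / 2**30


def pack5(trits):
    """Pack five values from {-1, 0, 1} into one byte as a base-3 number."""
    value = 0
    for t in reversed(trits):
        value = value * 3 + (t + 1)  # shift {-1, 0, 1} to digits {0, 1, 2}
    return value


def unpack5(byte):
    """Inverse of pack5: recover the five ternary values from one byte."""
    out = []
    for _ in range(5):
        byte, digit = divmod(byte, 3)
        out.append(digit - 1)
    return out


n_params = 7_000_000_000  # hypothetical size, not a published Bonsai figure
for name, bits in [("fp16", BITS_FP16), ("ternary", BITS_TERNARY), ("binary", BITS_BINARY)]:
    ratio = BITS_FP16 / bits
    print(f"{name:8s} {weight_gib(n_params, bits):6.2f} GiB  ({ratio:.1f}x vs fp16)")

packed = pack5([1, 0, -1, 1, 0])
assert unpack5(packed) == [1, 0, -1, 1, 0]  # round-trips in a single byte
```

Packing five weights per byte costs 1.6 bits per weight, close to the 1.58-bit limit, which is why roughly 10× is the realistic ceiling for ternary storage.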