Our eighth generation TPUs: two chips for the agentic era

What it is

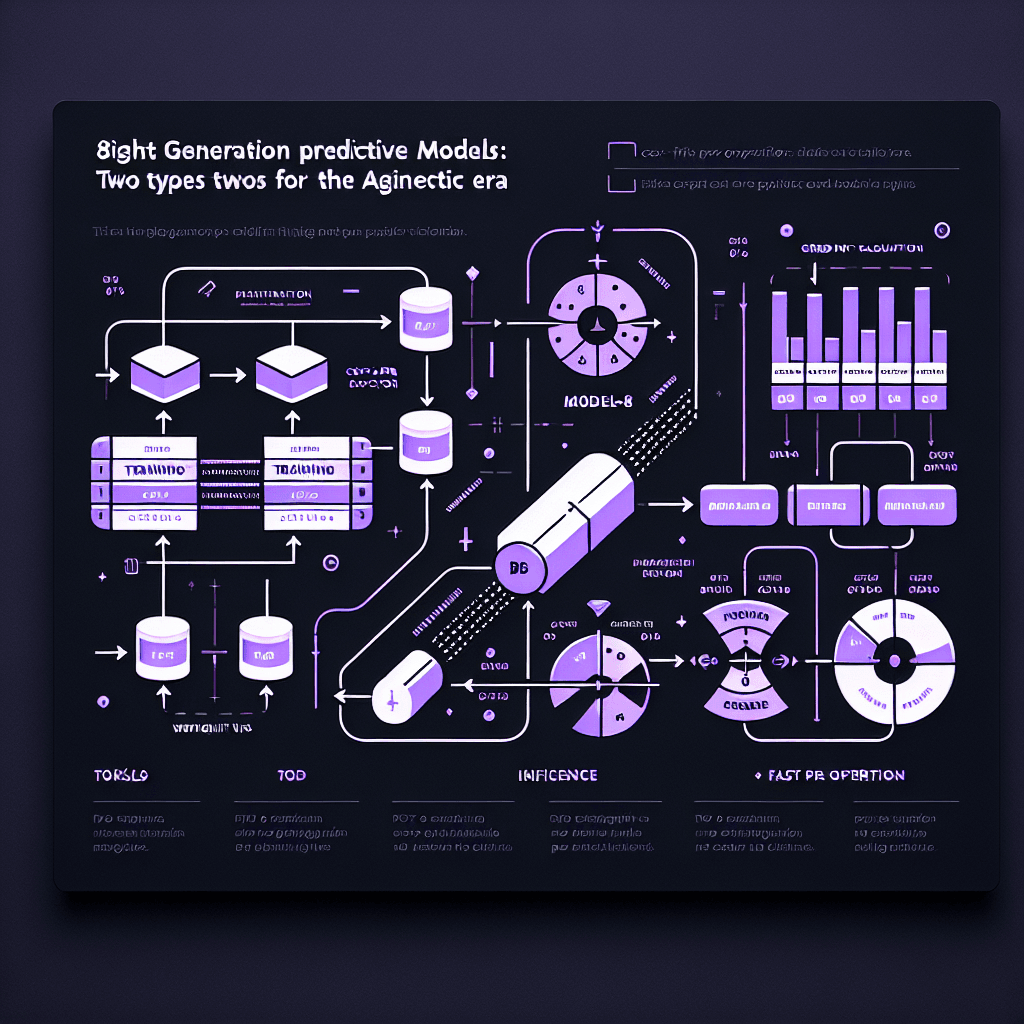

Picture a factory that used to make one all-purpose machine, now building two specialists. Google's TPU v8 splits into TPU-8T (training) and TPU-8i (inference). Training chips optimize for crunching gradients during model development. Inference chips maximize throughput when serving AI agents that need to respond instantly to millions of requests.

Why it matters

If you're building agentic AI (chatbots, coding assistants, reasoning chains), inference cost determines your unit economics. A 50% lower cost per token on TPU-8i, measured against TPU v5e, means the same budget serves roughly twice the users, or the same users cost about half as much. For model builders, TPU-8T's 4× performance-per-dollar improvement over TPU v5p makes experimenting with larger models feasible without burning through compute budgets.
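The arithmetic behind these claims can be sketched in a few lines of Python. This is a minimal illustration, not a pricing calculator: the function names, the budget, and the dollar figures are hypothetical placeholders, not Google Cloud prices. Only the 50% and 4× ratios come from the announcement as summarized here.

```python
def tokens_per_budget(budget_usd: float, price_per_million_tokens_usd: float) -> float:
    """Return how many tokens a budget buys at a given price per million tokens."""
    return budget_usd / price_per_million_tokens_usd * 1_000_000


def cost_after_perf_gain(baseline_cost_usd: float, perf_per_dollar_gain: float) -> float:
    """Return the cost of the same workload after a performance-per-dollar gain."""
    return baseline_cost_usd / perf_per_dollar_gain


# Hypothetical placeholder figures (NOT real Google Cloud pricing).
budget = 10_000.0            # monthly inference budget in USD
baseline_price = 2.00        # USD per million tokens on the baseline chip
reduced_price = baseline_price * (1 - 0.50)  # 50% lower cost per token

baseline_tokens = tokens_per_budget(budget, baseline_price)
reduced_tokens = tokens_per_budget(budget, reduced_price)

# Halving the price per token doubles the tokens served on a fixed budget.
throughput_ratio = reduced_tokens / baseline_tokens

# A 4x performance-per-dollar gain cuts a fixed training job to a quarter of its cost.
training_cost = cost_after_perf_gain(100_000.0, 4.0)

print(f"Tokens served on the same budget: {throughput_ratio:.1f}x")
print(f"Training cost for the same job: ${training_cost:,.0f}")
```

The "twice the users" reading assumes each user consumes the same number of tokens before and after the switch; if cheaper inference encourages longer agent runs per user, the gain in users served will be smaller.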

Key details

- TPU-8T: 4× better performance-per-dollar than TPU v5p for training workloads

- TPU-8i: 50% lower cost-per-token than TPU v5e, optimized for high-throughput inference

- Both available now on Google Cloud; pricing varies by region and commitment tier

- Architecture divergence: training chips prioritize memory bandwidth, inference chips prioritize latency and batch processing

- Google calls this the 'agentic era': systems that continuously reason and act, not just respond to prompts

Worth watching

Google Cloud Next '26 Keynote: Building the Agentic Enterprise (Every Major Announcement) (10:29)

Google Cloud

This official Google Cloud keynote provides comprehensive coverage of the eighth generation TPUs and their role in the agentic enterprise platform announced at Google Cloud Next '26.

Google Cloud Next '26 Opening Keynote (1:39:02)

Google Cloud

The opening keynote directly addresses Google's latest TPU announcements and strategic positioning in the agentic AI era with detailed technical and business context.

313 | Breaking Analysis | Google's Agent Platform Takes Pole Position but Work Remains (45:41)

SiliconANGLE theCUBE