LiteLLM Python package compromised by supply-chain attack

What it is

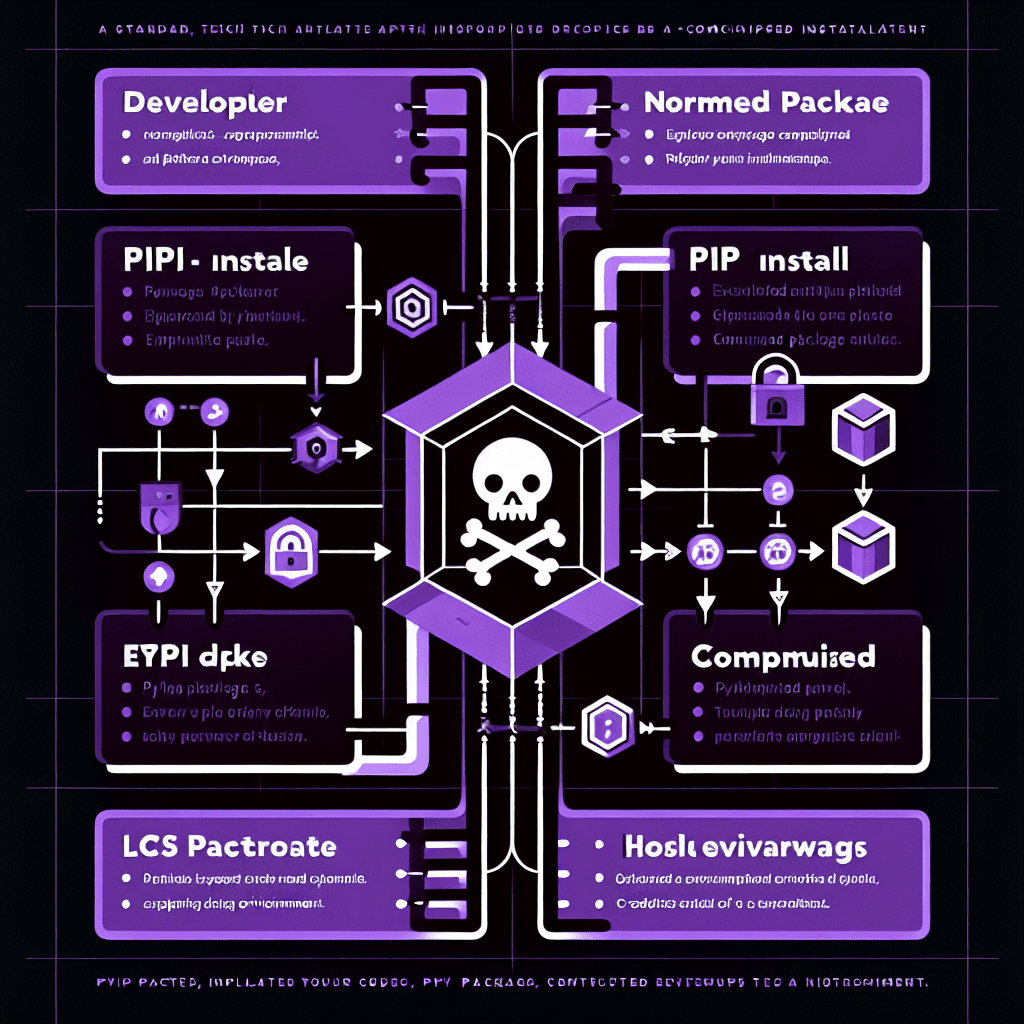

A supply-chain attack occurs when attackers compromise a software dependency that developers install automatically. Think of it like poisoning the water supply instead of knocking on individual doors. In this case, someone injected malicious code into LiteLLM, a Python package that acts as a universal translator and router for different LLM APIs (OpenAI, Anthropic, Cohere, etc.).

Why it matters

If you've installed or updated LiteLLM recently, your API keys and environment variables may have been exfiltrated. More broadly, this shows AI tooling is now high-value infrastructure. As everyone races to integrate LLMs, the dependencies we pull in become attack vectors. Pin your package versions, audit your supply chain, and rotate credentials if you use affected packages.
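To make the "rotate credentials" step concrete, here is a minimal sketch that lists the names (never the values) of environment variables that look like credentials, so you know what to rotate. The name patterns and the function `credential_like_names` are assumptions made for illustration; they are a heuristic, not a record of what the compromised package actually read.

```python
import os
import re

# Assumption: credentials are usually stored under names containing one of
# these fragments. This is a naming heuristic, not an exhaustive rule.
SECRET_NAME_PATTERN = re.compile(
    r"(API_?KEY|SECRET|TOKEN|PASSWORD|CREDENTIAL)", re.IGNORECASE
)


def credential_like_names(environ=None):
    """Return sorted variable names that look like they hold credentials.

    Only names are returned; values are never read or printed.
    """
    env = os.environ if environ is None else environ
    return sorted(name for name in env if SECRET_NAME_PATTERN.search(name))


if __name__ == "__main__":
    # Print a rotation checklist for the current process environment.
    for name in credential_like_names():
        print(name)
```

Run it on any machine or CI runner where an affected version may have been installed, then rotate every key on the list with its provider.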

Key details

- LiteLLM is a proxy layer that lets you call 100+ LLM providers with unified syntax

- Attack reported via GitHub issue #24512 on the BerriAI/litellm repository

- Compromised versions likely exfiltrated API keys stored in environment variables

- Supply-chain attacks on Python packages have spiked 200%+ year-over-year as AI adoption grows

- Standard mitigation: pin exact versions in requirements.txt, use lock files, rotate all credentials
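The pinning advice above can be checked mechanically. The following is a rough sketch, not a full requirements parser: the function `unpinned_requirements` and its regular expression are illustrative assumptions, and it only treats `name==version` (optionally with extras and an environment marker) as pinned. Wildcards such as `==1.*`, ranges, bare names, and URL requirements are all reported as loose.

```python
import re

# Assumption: an "exact pin" is name[extras]==version with no wildcard,
# optionally followed by an environment marker after ";".
PINNED = re.compile(
    r"^[A-Za-z0-9][A-Za-z0-9._-]*(\[[^\]]*\])?\s*==\s*[^\s*;,]+\s*(;.*)?$"
)


def unpinned_requirements(text):
    """Return requirement lines from requirements.txt text that are not exact pins."""
    loose = []
    for raw in text.splitlines():
        # Drop comments and surrounding whitespace.
        line = raw.split("#", 1)[0].strip()
        # Skip blanks and option lines such as "-r other.txt" or "--hash=...".
        if not line or line.startswith("-"):
            continue
        # Ignore inline hashes and trailing line continuations.
        line = line.split(" --hash", 1)[0].rstrip("\\").strip()
        if not PINNED.match(line):
            loose.append(line)
    return loose
```

A typical use is to call `unpinned_requirements(open("requirements.txt").read())` in CI and fail the build if the returned list is non-empty. Pinning alone does not help if the pinned version is itself a compromised one, which is why lock files with hashes and credential rotation appear in the same bullet.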

Worth watching

The LiteLLM Attack Explained: The Future of AI Supply Chain Risk (1:54)

ZerberusAI

Provides comprehensive analysis of the attack mechanics and broader implications for AI supply chain security rather than just breaking news.

The largest supply-chain attack ever… (4:00)

Fireship

Fireship's reputation for clear, well-researched technical explanations suggests this will offer deeper context on how supply chain attacks work at a fundamental level.

The LiteLLM Hack: A Chain of Compromised Trust | The LiteLLM Hack Explained in 5 Simple Steps 😱 (4:49)

CodeXray AI

The structured '5 Simple Steps' approach indicates this video breaks down the attack progression logically, making it easier to understand the full chain of compromise.