Kernel code removals driven by LLM-created security reports

What it is

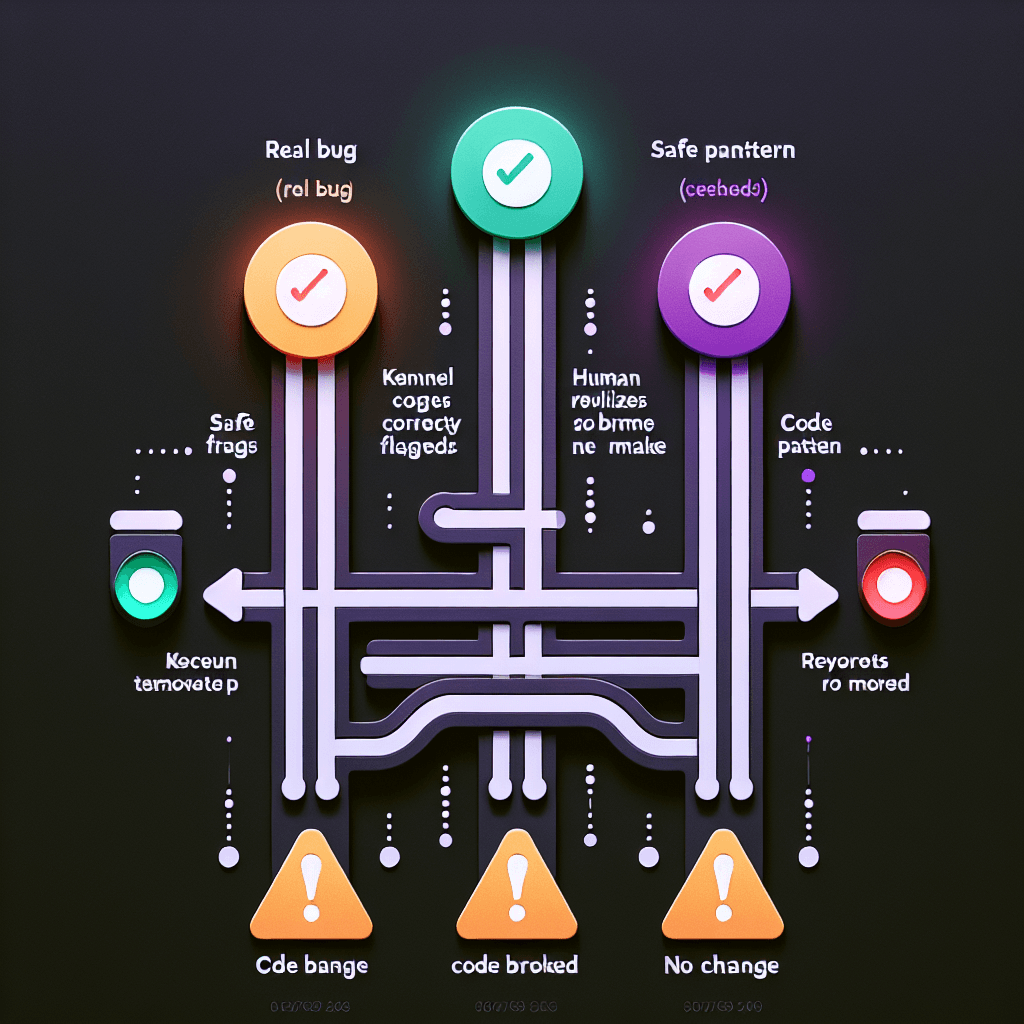

An LLM-based security scanner generated bug reports for the Linux kernel. Think of it as an AI intern who has read security textbooks but doesn't understand context: it flags patterns that *look* like vulnerabilities without grasping when those patterns are actually safe. Maintainers reviewed these reports, agreed that they described real bugs, and deleted the code. Later review showed that the AI had been wrong.

Why it matters

This is a canary in the coal mine for AI-assisted code review. If Linux kernel maintainers, some of the most experienced developers anywhere, can be misled by confident-sounding AI reports, your team can be too. Before you let an LLM flag security issues in production code, remember that it is matching patterns, not reasoning about your code. Always have its findings checked by someone who knows the codebase's idioms and intentional design choices.

Key details

- The LLM flagged legitimate code patterns as security vulnerabilities, producing false positives that looked credible

- Kernel maintainers reviewed and approved the removals, then had to revert them after discovering the mistakes

- This shows that AI tools cannot yet reliably distinguish genuine bugs from safe-but-unusual coding patterns

- It exposes a risk: automated security scanning without human expertise can lead to working code being removed

- Linux kernel developers are now more cautious about AI-generated security reports