Model Launch · 3h ago

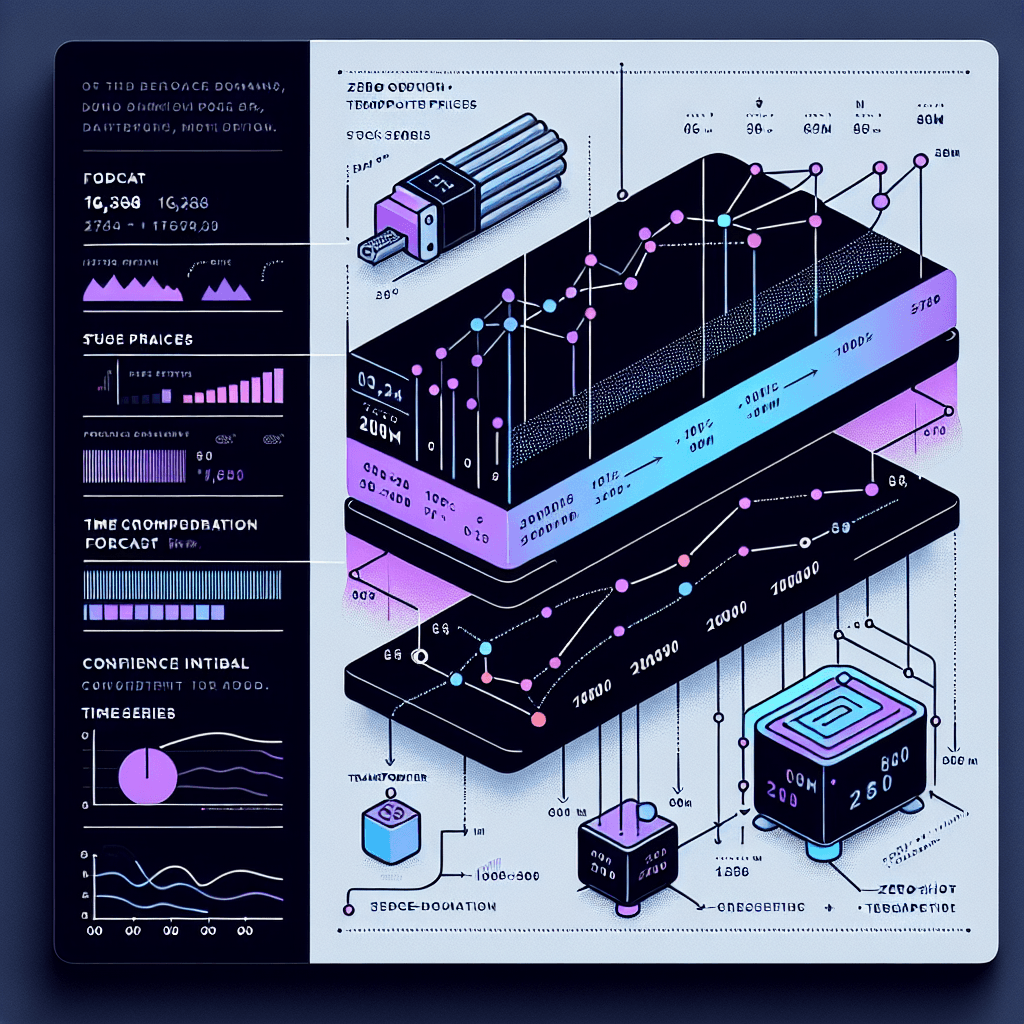

Google's 200M-parameter time-series foundation model with 16k context

What it is

TimesFM is a foundation model that predicts future values in time-series data such as stock prices, sensor readings, or server metrics. Instead of training a custom model for each dataset, you feed it a series' historical values and it forecasts what comes next. The 16k context window means it can take in up to 16,384 past data points per series when making a prediction.
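To make the context limit concrete, here is a minimal sketch of trimming a long history to the most recent 16,384 points before handing it to a forecaster. The helper name `clip_to_context` and the sample data are illustrative assumptions, not part of the TimesFM library, which may handle over-long inputs differently.

```python
from typing import Sequence

MAX_CONTEXT = 16_384  # context length quoted in this article


def clip_to_context(history: Sequence[float], max_context: int = MAX_CONTEXT) -> list[float]:
    """Return the most recent `max_context` points, oldest first.

    Hypothetical helper for illustration only; not a TimesFM API.
    """
    if max_context <= 0:
        raise ValueError("max_context must be positive")
    return list(history[-max_context:])


# Made-up sensor history that is longer than the context window.
readings = [float(i) for i in range(20_000)]
context = clip_to_context(readings)
print(len(context), context[0], context[-1])
```

Keeping the newest points is a design choice that suits most monitoring data, where recent behavior is the most informative; a series shorter than the window passes through unchanged.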

Why it matters

This matters if you work with time-series data but lack the ML expertise or compute to train models. Accurate forecasting has typically meant building a domain-specific model and training it on enough of your own historical data. TimesFM works zero-shot: no training, just inference. If you're monitoring metrics, predicting demand, or analyzing trends, you now have a pre-trained model that aims to rival custom solutions without the setup cost.

Key details

- 200 million parameters: far smaller than typical LLMs, but purpose-built for numerical sequences

- Context window of 16,384 time points, enough to capture long-range dependencies in the data

- Pre-trained on 100 billion data points from Google's internal datasets plus synthetic data

- Zero-shot inference: works across domains without fine-tuning on your specific data

- Open-sourced on GitHub under google-research/timesfm and available for experimentation now
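Because zero-shot inference needs no training step, one way to judge it on your own data is a simple holdout test: hide the last few points, forecast them from the rest, and compare the error against a naive baseline. The sketch below assumes a hypothetical forecaster signature `(history, horizon) -> list of predictions`; a TimesFM call would need to be wrapped to match it, and nothing here uses the real TimesFM API. The request counts are made up.

```python
from typing import Callable, Sequence

# Hypothetical interface: any function mapping (history, horizon) to predictions.
Forecaster = Callable[[Sequence[float], int], list[float]]


def repeat_last_value(history: Sequence[float], horizon: int) -> list[float]:
    """Naive baseline: repeat the last observed value for every future step."""
    return [history[-1]] * horizon


def holdout_mae(series: Sequence[float], horizon: int, forecaster: Forecaster) -> float:
    """Hide the last `horizon` points, forecast them, and return the mean absolute error."""
    if not 0 < horizon < len(series):
        raise ValueError("horizon must be between 1 and len(series) - 1")
    history, actual = series[:-horizon], series[-horizon:]
    predicted = forecaster(history, horizon)
    return sum(abs(p - a) for p, a in zip(predicted, actual)) / horizon


# Made-up requests-per-minute series for illustration.
requests_per_minute = [100.0, 102.0, 101.0, 105.0, 107.0, 110.0, 108.0, 112.0]
baseline_error = holdout_mae(requests_per_minute, horizon=3, forecaster=repeat_last_value)
print(f"baseline MAE: {baseline_error:.2f}")
```

Passing a wrapped pre-trained model as `forecaster` and comparing its error with `baseline_error` gives a quick sanity check on whether the zero-shot forecasts add value for a given dataset.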