CrabTrap: An LLM-as-a-judge HTTP proxy to secure agents in production

What it is

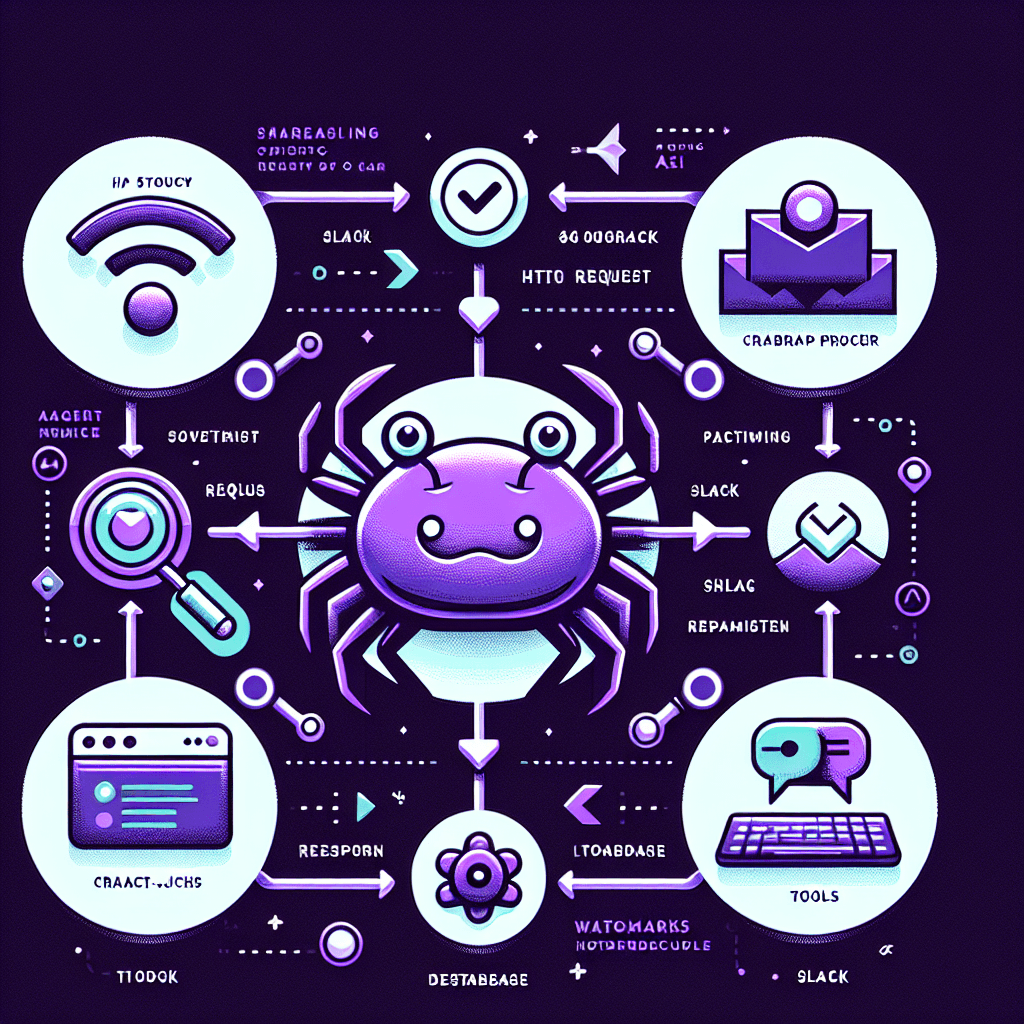

CrabTrap sits between your AI agent and the tools it calls. Picture a checkpoint: every HTTP request your agent makes passes through a proxy where a second LLM evaluates whether the action is safe. If this judge model spots something risky, such as a data deletion or a privilege escalation, the proxy blocks the request before it executes. You get agent guardrails without changing your application code.
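The checkpoint flow can be sketched in a few lines of Python. This is a minimal sketch, not CrabTrap's implementation. The request and response types, `build_judge_prompt`, and the keyword-matching `stub_judge` are all hypothetical. The stub stands in for the second LLM so the example runs offline. The part that mirrors the description is `handle`: it consults the judge first, and a blocked request never reaches the upstream tool.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class AgentRequest:
    """An outbound HTTP request intercepted from the agent (hypothetical shape)."""
    method: str
    url: str
    body: str = ""


@dataclass(frozen=True)
class Verdict:
    allow: bool
    reason: str


@dataclass(frozen=True)
class ProxyResponse:
    status: int
    body: str


# The judge maps a request to a verdict; in CrabTrap this role is played by a second LLM.
Judge = Callable[[AgentRequest], Verdict]
# The forwarder sends an approved request on to the real tool or API.
Forwarder = Callable[[AgentRequest], ProxyResponse]


def build_judge_prompt(req: AgentRequest) -> str:
    """Hypothetical prompt a judge model could receive for a single request."""
    return (
        "You review HTTP requests made by an AI agent.\n"
        "Answer ALLOW or BLOCK, then give a one-line reason.\n"
        f"Method: {req.method}\nURL: {req.url}\nBody: {req.body}"
    )


def stub_judge(req: AgentRequest) -> Verdict:
    """Offline stand-in for the LLM call; a real judge would send build_judge_prompt(req) to a model."""
    text = f"{req.method} {req.url} {req.body}".lower()
    if req.method.upper() == "DELETE" or "drop table" in text:
        return Verdict(False, "data deletion")
    if "role" in text and "admin" in text:
        return Verdict(False, "possible privilege escalation")
    return Verdict(True, "no risky action detected")


def handle(req: AgentRequest, judge: Judge, forward: Forwarder) -> ProxyResponse:
    """Consult the judge first; forward upstream only if the request is allowed."""
    try:
        verdict = judge(req)
    except Exception as exc:  # judge unreachable or failed: fail closed (an assumption of this sketch)
        verdict = Verdict(False, f"judge error: {exc}")
    if not verdict.allow:
        # The upstream tool is never called, so the action never executes.
        return ProxyResponse(403, f"blocked by judge: {verdict.reason}")
    return forward(req)


def fake_upstream(req: AgentRequest) -> ProxyResponse:
    """Pretend tool endpoint that always succeeds."""
    return ProxyResponse(200, "ok")


print(handle(AgentRequest("GET", "https://api.example.com/users/42"), stub_judge, fake_upstream))
# ProxyResponse(status=200, body='ok')
print(handle(AgentRequest("DELETE", "https://api.example.com/users/42"), stub_judge, fake_upstream))
# ProxyResponse(status=403, body='blocked by judge: data deletion')
```

One design choice to flag: the sketch fails closed and treats a judge error or timeout as a block. That choice belongs to this sketch and is not documented CrabTrap behavior. Failing open would favor availability over safety.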

Why it matters

Production AI agents are moving from demos to real systems with real consequences. You need a way to prevent catastrophic mistakes without hardcoding a rule for every edge case or slowing down iteration. CrabTrap gives you a safety layer that is transparent to the agent, plugs into an existing deployment, and doesn't require rebuilding your agent from scratch. If you're running agents that touch sensitive operations, it lets you stop a dangerous action before it runs instead of cleaning up after it.

Key details

- Open-sourced by Brex for securing production AI agents

- Works as an HTTP proxy that intercepts requests between the agent and its tools

- Uses the LLM-as-a-judge pattern: a second model evaluates each tool call for safety

- Blocks risky actions (deletions, privilege changes) before execution

- Zero changes to your agent's code: just route its traffic through the proxy
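Routing an agent through a proxy is usually a matter of configuration rather than code. The Python sketch below assumes the proxy listens at the hypothetical address `http://127.0.0.1:8080`. Check CrabTrap's own documentation for its actual listen address and setup. The standard `http_proxy`/`https_proxy` environment variables cover any client that honors them. An explicit `urllib.request.ProxyHandler` covers a single client.

```python
import os
import urllib.request

# Hypothetical listen address; substitute wherever your proxy actually runs.
PROXY_URL = "http://127.0.0.1:8080"


def route_via_env(proxy_url: str) -> None:
    """Point the standard proxy variables at the judge proxy.

    Clients that honor these variables (for example urllib and requests)
    pick up the proxy without any change to agent code.
    """
    for name in ("http_proxy", "https_proxy"):
        os.environ[name] = proxy_url          # lowercase: curl only honors http_proxy in this form
        os.environ[name.upper()] = proxy_url  # uppercase: read by many other clients


def explicit_opener(proxy_url: str) -> urllib.request.OpenerDirector:
    """Alternative for one client: send this opener's urllib traffic through the proxy."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)


route_via_env(PROXY_URL)
proxies = urllib.request.getproxies()
print(proxies["http"], proxies["https"])
# http://127.0.0.1:8080 http://127.0.0.1:8080

opener = explicit_opener(PROXY_URL)
# opener.open("https://api.example.com/v1/items") would now connect via PROXY_URL.
```

For HTTPS, a proxy can read request paths and bodies only if it terminates TLS. In that case the agent's HTTP client must also trust the certificate the proxy presents. How CrabTrap handles this is not covered here.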