Cloudflare's AI Platform: an inference layer designed for agents

What it is

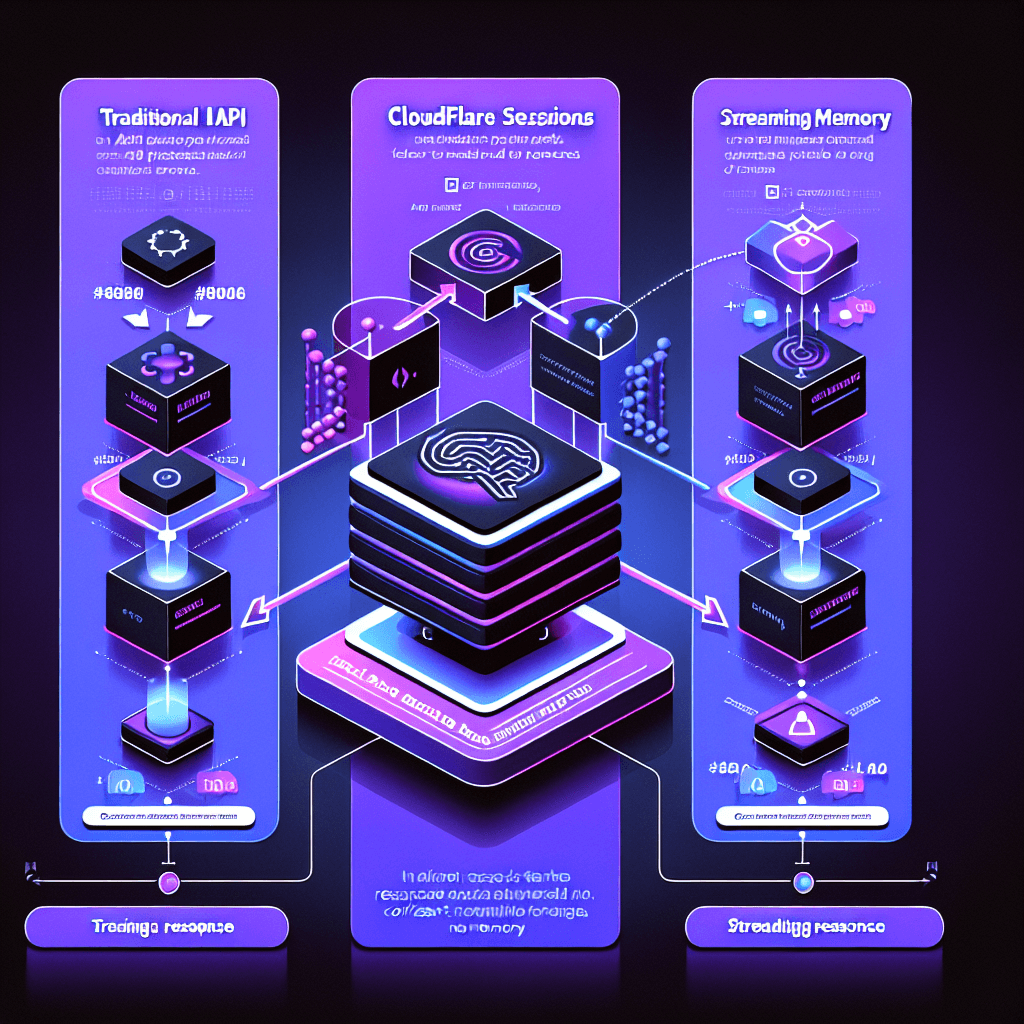

Think of it as stateful inference for AI agents. Most LLM APIs treat each request as isolated, so context is lost between calls unless you resend it. Cloudflare's new Workers AI platform gives agents persistent memory (sessions) and lets them stream tool calls mid-response. Picture an agent that remembers your last five interactions and can call an external API before it has finished generating its response.
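To make "tool calls mid-response" concrete, here is a minimal sketch of the idea. It does not use Cloudflare's API: the event shapes, `fakeModelStream`, `runAgent`, and the `getWeather` tool are all hypothetical names invented for illustration. The point is only that a tool starts running as soon as its event arrives in the stream, not after the final token.

```typescript
// Hypothetical event shapes for illustration; not Cloudflare's actual wire format.
type StreamEvent =
  | { type: "text"; delta: string }
  | { type: "tool_call"; name: string; args: Record<string, unknown> }
  | { type: "done" };

// Stand-in for a model stream that emits a tool call partway through its answer.
async function* fakeModelStream(): AsyncGenerator<StreamEvent> {
  yield { type: "text", delta: "Checking the weather" };
  yield { type: "tool_call", name: "getWeather", args: { city: "Lisbon" } };
  yield { type: "text", delta: " for you." };
  yield { type: "done" };
}

// Hypothetical tool registry; a real agent would call external APIs here.
const tools: Record<string, (args: Record<string, unknown>) => Promise<string>> = {
  getWeather: async (args) => `Sunny in ${String(args.city)}`,
};

// Consume the stream and start each tool call as soon as its event arrives,
// instead of waiting for the "done" event.
async function runAgent(stream: AsyncGenerator<StreamEvent>) {
  let text = "";
  const pending: Promise<string>[] = [];
  const log: string[] = []; // records the order in which events were handled
  for await (const event of stream) {
    if (event.type === "text") {
      text += event.delta;
      log.push("text");
    } else if (event.type === "tool_call") {
      const tool = tools[event.name];
      if (tool) pending.push(tool(event.args)); // kicked off mid-stream
      log.push(`tool:${event.name}`);
    } else {
      log.push("done");
    }
  }
  const toolResults = await Promise.all(pending);
  return { text, toolResults, log };
}
```

Calling `runAgent(fakeModelStream())` resolves with the assembled text, the tool results, and a log showing that the tool call was handled between the two text chunks.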

Why it matters

If you're building agents that do real work (not just chatbots), this matters. Traditional inference platforms force you to rebuild context on every request or manage state yourself. Cloudflare handles session persistence and runs inference on its edge network, which should mean lower latency. The Agents SDK includes MCP (Model Context Protocol) support, so agents can connect to tools like Brave Search or GitHub without custom wrappers. The trade-off: you're locked into Cloudflare's platform, but you skip the infrastructure headache.
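The difference between rebuilding context yourself and having a session hold it can be sketched as follows. This is an in-memory stand-in, not Cloudflare's Sessions API: `SessionStore`, `statelessRequest`, and the `maxMessages` parameter are hypothetical names, and a real platform would persist the history server-side.

```typescript
type Message = { role: "user" | "assistant"; content: string };

// Stateless style: the caller must carry and resend the whole history each time.
function statelessRequest(history: Message[], userInput: string): Message[] {
  return [...history, { role: "user", content: userInput }];
}

// Stateful style: a hypothetical in-memory session store keyed by session ID.
// The caller sends only the new message plus the ID.
class SessionStore {
  private sessions = new Map<string, Message[]>();
  private maxMessages: number;

  constructor(maxMessages = 10) {
    this.maxMessages = maxMessages;
  }

  // Append a message and keep only the most recent `maxMessages` entries.
  append(sessionId: string, message: Message): Message[] {
    const history = this.sessions.get(sessionId) ?? [];
    const trimmed = [...history, message].slice(-this.maxMessages);
    this.sessions.set(sessionId, trimmed);
    return trimmed;
  }

  // Return the stored history, or an empty list for an unknown session.
  get(sessionId: string): Message[] {
    return this.sessions.get(sessionId) ?? [];
  }
}
```

With `new SessionStore(2)`, appending three messages to the same session leaves only the last two in `get(sessionId)`, which is the same trimming an agent would need to "remember your last five interactions" with a larger limit.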

Key details

- Sessions API maintains conversation context across requests, so no manual state management is required

- Streaming tool calls: agents can invoke functions mid-response instead of waiting for full completion

- MCP server integration is built in: connect to Brave Search, Filesystem, GitHub, Slack, and others without custom code

- Pricing: Llama 3.1 8B at $0.011/1M tokens, Llama 3.3 70B at $0.054/1M tokens (input rates shown)

- Agents SDK available now in JavaScript/TypeScript; Python support coming soon
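To get a feel for the input prices listed above, here is a small cost estimator. The rates are copied from the pricing bullet; the model keys and the `estimateInputCost` function are hypothetical labels for this sketch, and output-token pricing is not covered because the source only gives input rates.

```typescript
// Input prices in USD per 1M tokens, as quoted in the pricing bullet.
// The keys are illustrative labels, not official model identifiers.
const INPUT_PRICE_PER_MILLION: Record<string, number> = {
  "llama-3.1-8b": 0.011,
  "llama-3.3-70b": 0.054,
};

// Estimate the input-side cost in USD for a given number of input tokens.
function estimateInputCost(model: string, inputTokens: number): number {
  const price = INPUT_PRICE_PER_MILLION[model];
  if (price === undefined) throw new Error(`Unknown model: ${model}`);
  return (inputTokens / 1_000_000) * price;
}

// Example workload: 10,000 requests at 2,000 input tokens each = 20M tokens.
const totalTokens = 10_000 * 2_000;
console.log(estimateInputCost("llama-3.1-8b", totalTokens).toFixed(2));
console.log(estimateInputCost("llama-3.3-70b", totalTokens).toFixed(2));
```

For that example workload the input cost works out to roughly $0.22 on the 8B model and $1.08 on the 70B model (20 × 0.011 and 20 × 0.054).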

Worth watching

Cloudflare Agents 101 - Deploy your first AI agent (30:10)

Cloudflare Developers

This official Cloudflare Developers tutorial walks through deploying your first AI agent on the platform. Start here if you want hands-on setup steps.

Cloudflare Just Made AI Agents WAY Easier (Agents SDK) (7:14)

Better Stack

This video explains how Cloudflare's Agents SDK simplifies AI agent development and gives a quick overview of the platform's core capabilities.

Moltworker (for OpenClaw) & Markdown for Agents: Running AI on Cloudflare (27:30)

Cloudflare

This deep dive covers running AI agents on Cloudflare infrastructure using Moltworker and explores the technical architecture behind the inference layer.