Tool Update · 1d ago

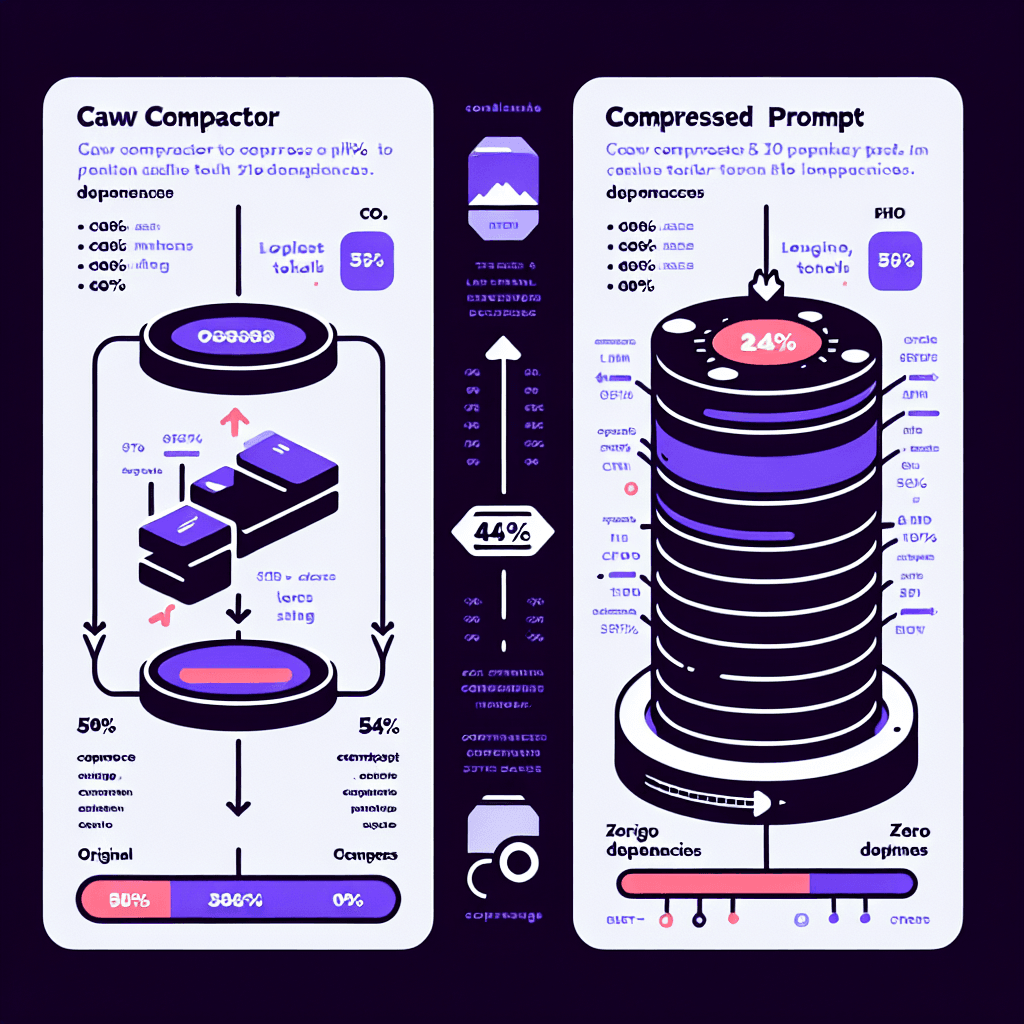

Claw Compactor: cut LLM token counts by an average of 54% with zero dependencies

What it is

Picture a smart zipper for your prompts. Claw Compactor analyzes the text you're about to send to an LLM and compresses it by removing redundancy, optimizing structure, and condensing verbose passages, all while preserving meaning. The LLM receives a smaller payload and processes it faster, and you pay less per call.
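To make the idea concrete, here is a minimal Python sketch of one simple kind of redundancy removal: collapsing runs of whitespace and dropping blank or exactly repeated lines. It is not Claw Compactor's actual algorithm or API, since neither is described here. The names `compress_prompt` and `rough_token_count` are hypothetical, and the whitespace-based count is only a crude stand-in for a real tokenizer.

```python
import re


def rough_token_count(text: str) -> int:
    """Crude proxy for token count: number of whitespace-separated words.

    Assumption: real tokenizers split text differently, so use this
    only as a relative before/after measure.
    """
    return len(text.split())


def compress_prompt(text: str) -> str:
    """Illustrative redundancy removal (hypothetical, not the tool's method).

    1. Collapse runs of spaces and tabs inside each line.
    2. Drop blank lines.
    3. Drop lines that exactly repeat an earlier line.
    """
    seen = set()
    kept = []
    for line in text.splitlines():
        normalized = re.sub(r"[ \t]+", " ", line).strip()
        if not normalized or normalized in seen:
            continue
        seen.add(normalized)
        kept.append(normalized)
    return "\n".join(kept)


if __name__ == "__main__":
    prompt = (
        "You are a helpful assistant.\n"
        "\n"
        "You are a   helpful assistant.\n"
        "Answer   the question using the context.\n"
        "Context: The sky is blue.\n"
        "Context: The sky is blue.\n"
    )
    compact = compress_prompt(prompt)
    print(compact)
    print(f"{rough_token_count(prompt)} -> {rough_token_count(compact)} rough tokens")
```

A real compressor would need to go well beyond exact-duplicate removal to condense verbose phrasing without changing meaning; this sketch only shows the shape of a local, pre-send compression step.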

Why it matters

If you're building with GPT-4, Claude, or similar models, you're probably bumping into context window limits or watching API costs climb. This tool sits between you and the API, with no server setup and no API keys to manage. Run it locally, compress your inputs, and cut the number of input tokens you pay for on each call. It is especially valuable for RAG systems, where you're stuffing retrieved documents into prompts.

Key details

- 54% average token reduction across typical prompts

- Zero dependencies: a pure implementation you can audit and trust

- Client-side compression means your data never leaves your machine

- Open-source on GitHub under the open-compress organization

- Works with any LLM API that accepts text input
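As a rough illustration of what a 54% average reduction could mean for spend, the sketch below estimates input-token cost before and after compression. The function names, the workload figures, and the price of $3.00 per million input tokens are all hypothetical assumptions chosen for the arithmetic. Only input tokens are modeled, and actual reduction will vary from prompt to prompt.

```python
def estimate_input_cost(tokens_per_call: int, calls: int, price_per_million: float) -> float:
    """Cost of input tokens only, given a price per one million tokens."""
    return tokens_per_call * calls * price_per_million / 1_000_000


def estimate_savings(tokens_per_call: int, calls: int, price_per_million: float,
                     reduction: float = 0.54):
    """Return (baseline_cost, compressed_cost, savings).

    `reduction` defaults to the 54% average quoted above; treat it as an
    assumption, since per-prompt results will differ.
    """
    baseline = estimate_input_cost(tokens_per_call, calls, price_per_million)
    compressed_tokens = round(tokens_per_call * (1 - reduction))
    compressed = estimate_input_cost(compressed_tokens, calls, price_per_million)
    return baseline, compressed, baseline - compressed


if __name__ == "__main__":
    # Hypothetical workload: 4,000 input tokens per call, 10,000 calls,
    # at a made-up price of $3.00 per million input tokens.
    baseline, compressed, saved = estimate_savings(4000, 10_000, 3.0)
    print(f"baseline ${baseline:.2f}, compressed ${compressed:.2f}, saved ${saved:.2f}")
```

Because output tokens are unaffected by input compression, the total bill would shrink by less than 54% in practice.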