Policy

2% of ICML papers desk-rejected because their authors used LLMs in their reviews

What it is

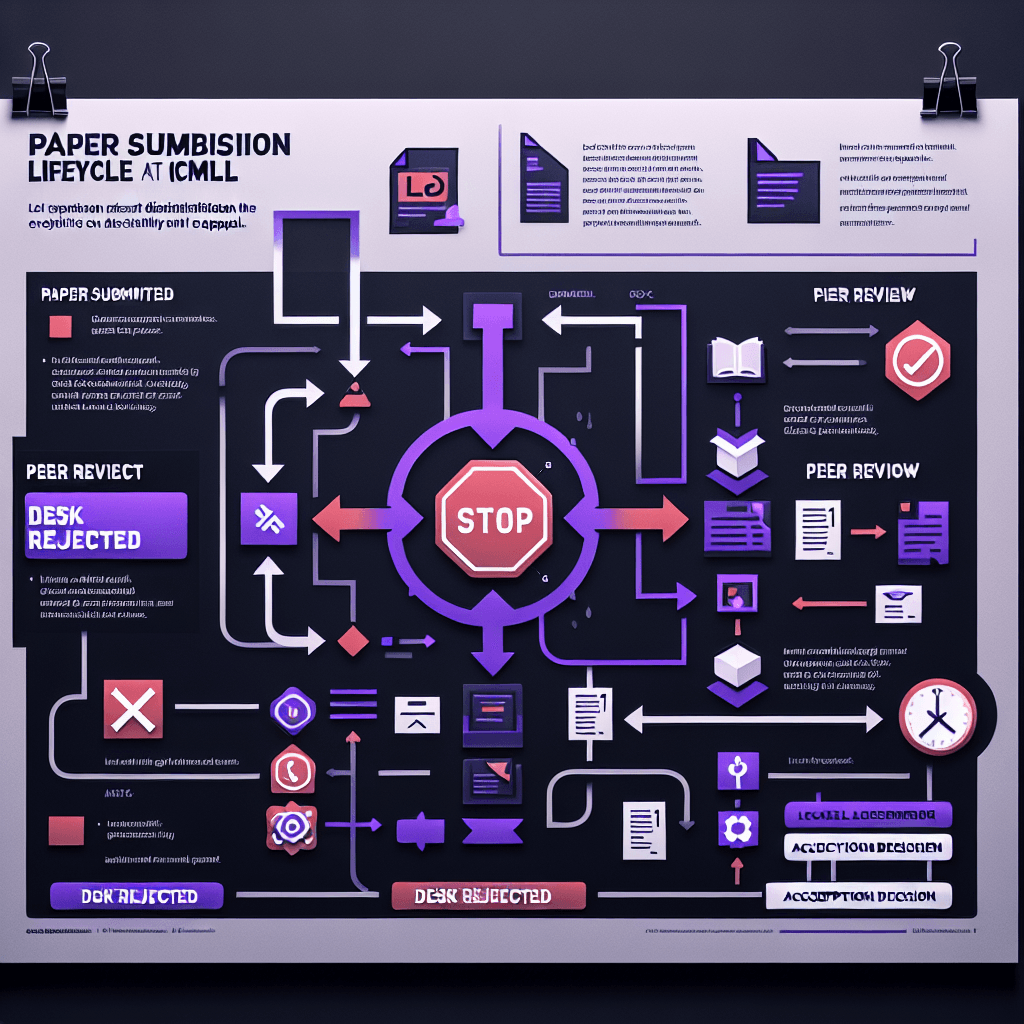

ICML (International Conference on Machine Learning) set a policy: you can't use LLMs to write peer reviews. If you review for ICML while also submitting a paper, and you're caught breaking that rule, your own submission gets desk-rejected. That means your paper is out before anyone reads it. Think of it as an automatic disqualification, like getting red-carded before the match starts.

Why it matters

If you're submitting to ML conferences, enforcement is now real, not theoretical. ICML has shown it will reject papers over LLM use in reviews, regardless of the merit of the research itself. For reviewers: don't lean on ChatGPT for your critique. For authors: the stakes just got clearer. Academic integrity rules are changing quickly to keep pace with the tools.

Key details

- 2% of ICML 2026 submissions were desk-rejected specifically because their authors violated the LLM policy while reviewing

- Desk rejection means the papers were removed before review; authors can't appeal or revise

- ICML's policy bans using LLMs to draft or substantially edit peer reviews, not just to generate them wholesale

- This is one of the first major enforcement actions at a top-tier ML conference

- The blog post suggests detection methods exist but doesn't detail how violations were identified