2% of ICML papers desk-rejected because their authors used LLMs to write reviews

What it is

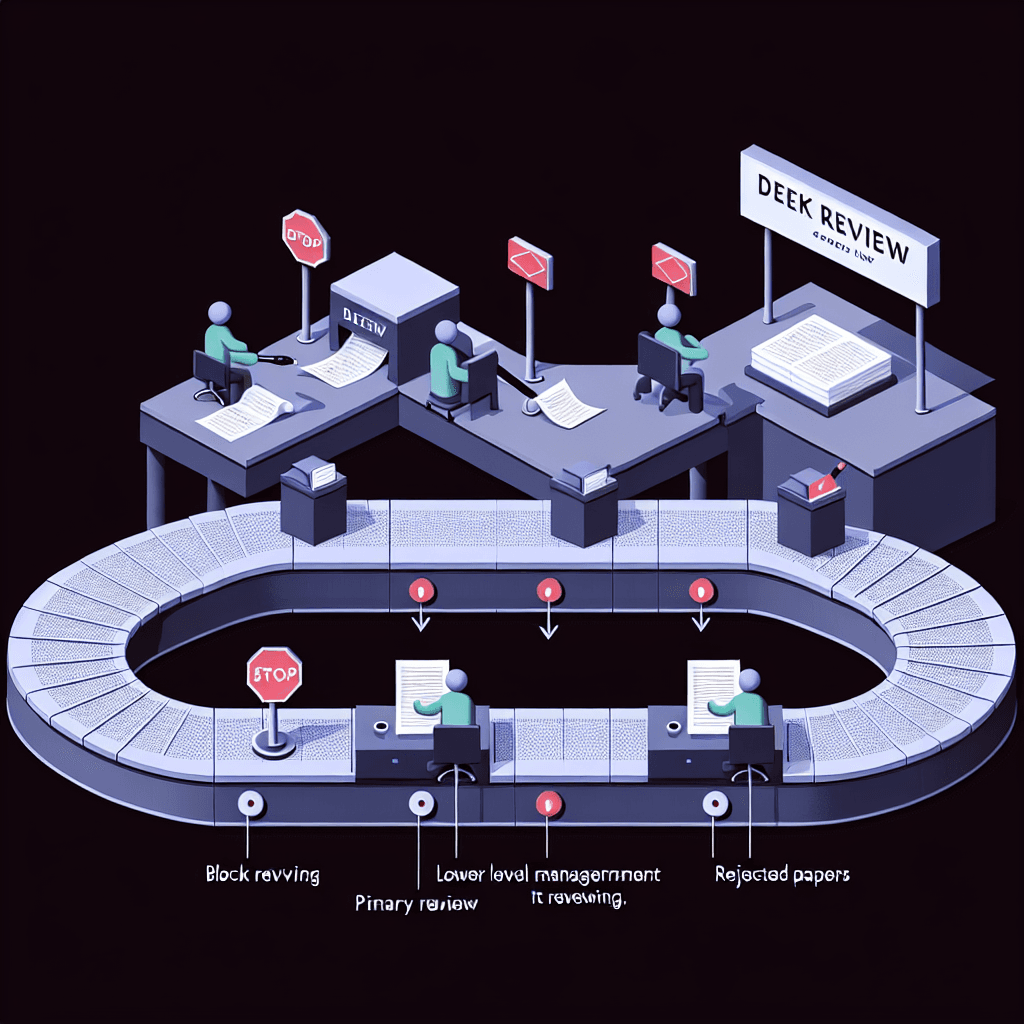

Think of peer review as a quality gate: experts read papers and write detailed critiques before publication. ICML found that some authors who were also assigned to review other submissions had fed those papers into ChatGPT or similar tools to generate their reviews instead of writing them. The conference caught this and desk-rejected those authors' own papers before they entered full review.

Why it matters

If you publish in AI/ML, treat this as a reality check. Conferences now actively detect LLM-generated reviews and enforce consequences that land on your own submissions. It also signals that the research community values human judgment in peer review enough to police it, even at venues where everyone builds LLMs. If you review papers, know that lazy AI-assisted reviews now carry career risk.

Key details

- ICML 2026 policy: explicitly prohibits using LLMs to generate any part of a peer review

- Enforcement: desk rejection (the paper is disqualified immediately and never enters the full review process)

- Scale: 2% of all submitted papers were rejected; detection likely relied on language patterns, review-quality flags, or author self-disclosure

- Timing: announced in March 2026, at one of the top-tier ML conferences

- Precedent: the first large-scale public enforcement action against LLM-assisted peer review at a major AI venue
